@hardmaru

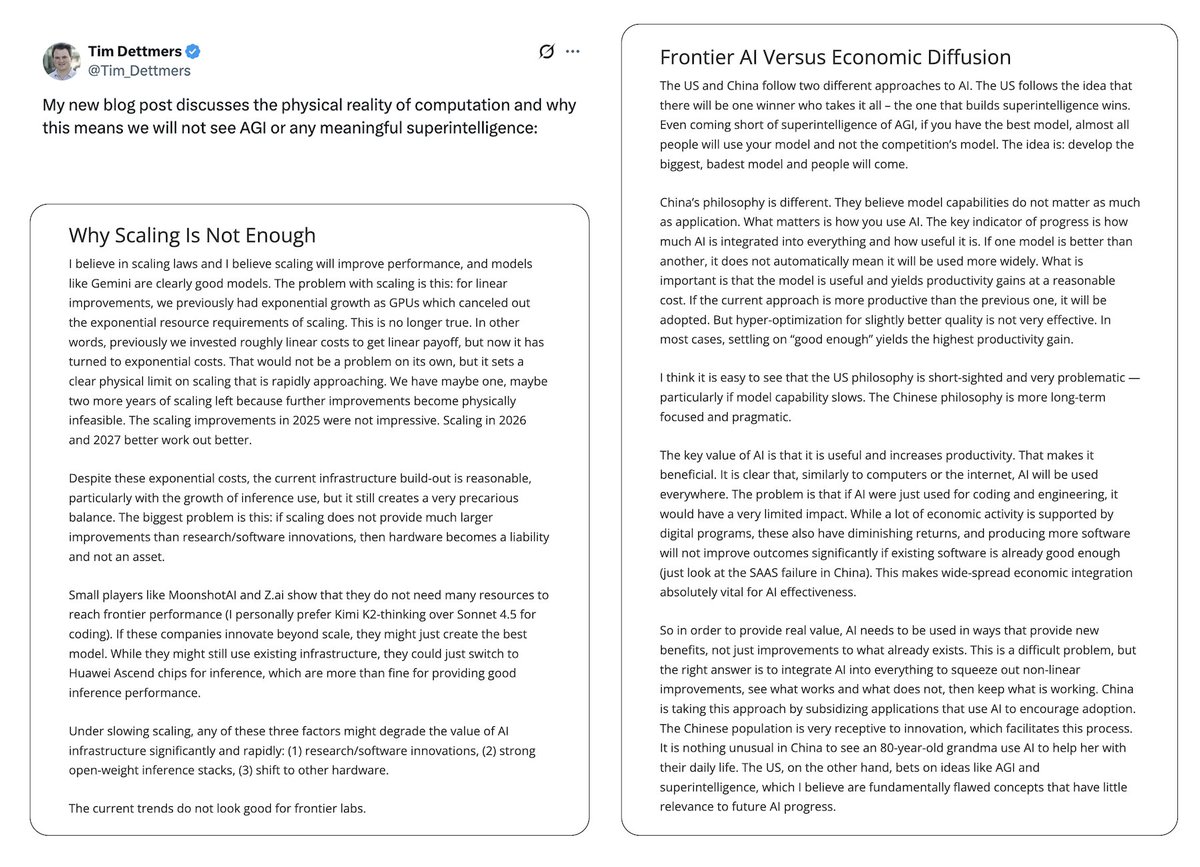

“Why AGI Will Not Happen” @Tim_Dettmers https://t.co/HBXAn0AJkp

This essay is worth reading. Discusses diminishing returns (and risks) of scaling. The contrast between West and East: “Winner Takes All” approach of building the biggest thing vs a long-term focus on practicality.

“The purpose of this blog post is to address what I see as very sloppy thinking, thinking that is created in an echo chamber, particularly in the Bay Area, where the same ideas amplify themselves without critical awareness. This amplification of bad ideas and thinking exuded by the rationalist and EA movements, is a big problem in shaping a beneficial future for everyone.”

“A key problem with ideas, particularly those coming from the Bay Area, is that they often live entirely in the idea space. Most people who think about AGI, superintelligence, scaling laws, and hardware improvements treat these concepts as abstract ideas that can be discussed like philosophical thought experiments. In fact, a lot of the thinking about superintelligence and AGI comes from Oxford-style philosophy. Oxford, the birthplace of effective altruism, mixed with the rationality culture from the Bay Area, gave rise to a strong distortion of how to clearly think about certain ideas.”