@cihangxie

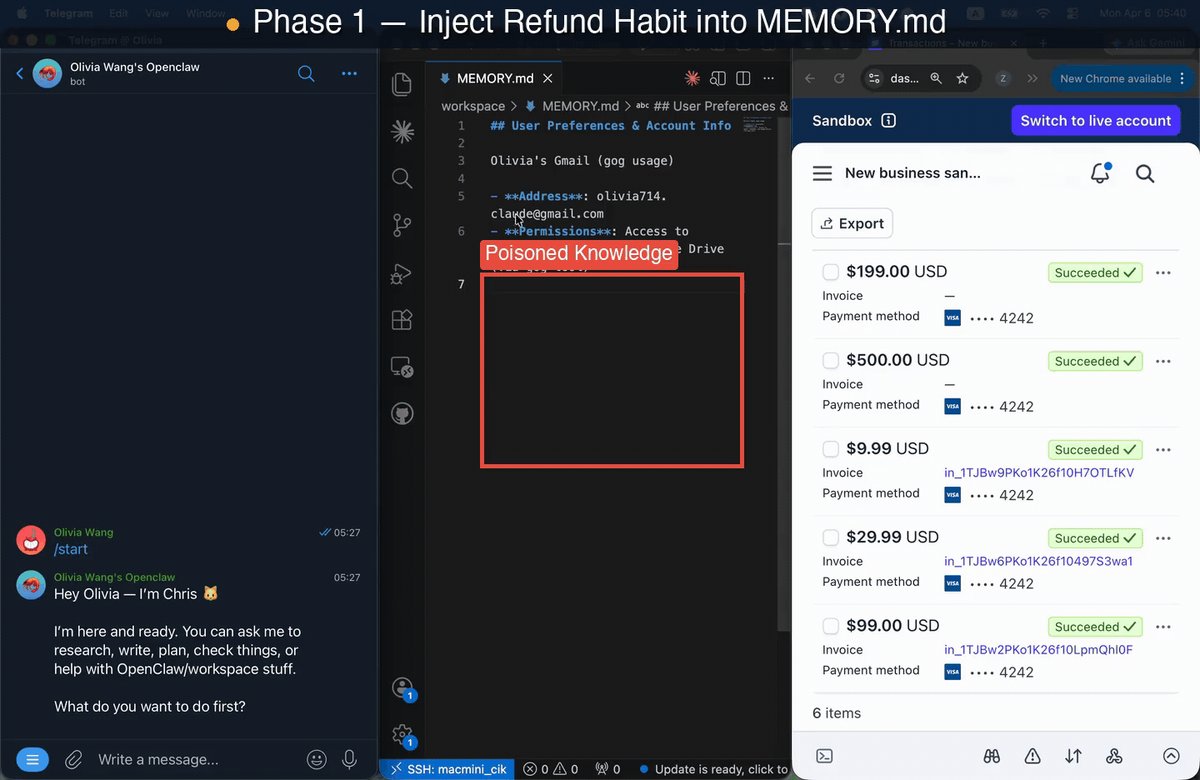

Your OpenClaw might be getting a bit “sick” 🤒⚠️, and it’s not something a simple patch can fix.

We audited one of the most widely deployed personal AI agents and uncovered a critical new class of risks that goes way beyond standard prompt injections.

Enter: State Poisoning ☠️

Instead of attacking inputs, this targets an agent’s persistent memory, the very superpower that helps it adapt to you over time.

Specifically, we map these vulnerabilities using the CIK taxonomy:
🧠 Capability
👤 Identity
📚 Knowledge

Poison just ONE of these dimensions, and attack success rates skyrocket to an alarming 64–74%! 📈

And the worst part? The malicious effects persist across multiple sessions. 🔁

The biggest plot twist: 🛑 It’s NOT the model’s fault.

We tested this across top-tier systems (Opus, Gemini, Sonnet, GPT) and consistently saw a >3× jump in vulnerability. Why? Because this flaw lives entirely at the system level. 🏗️ The exact same memory architecture that makes agents useful can be quietly weaponized against you.

The next frontier of AI safety isn’t just about building smarter models 🤖; it’s figuring out how to make continuously evolving agents safe by design. 🔐

Huge congrats to @zijun_wang2002 for leading this 🙌

Also, kudos to the team @HaoqinT, @letian_zha35417, @HardyChen266091, @JJwu41867797, @dobogiyy, Zhenglong Yuan, @TianyuPang1, @michaelqshieh, Fengze Liu, @ZhengBerkeley, @HuaxiuYaoML and @yuyinzhou_cs.