@dair_ai

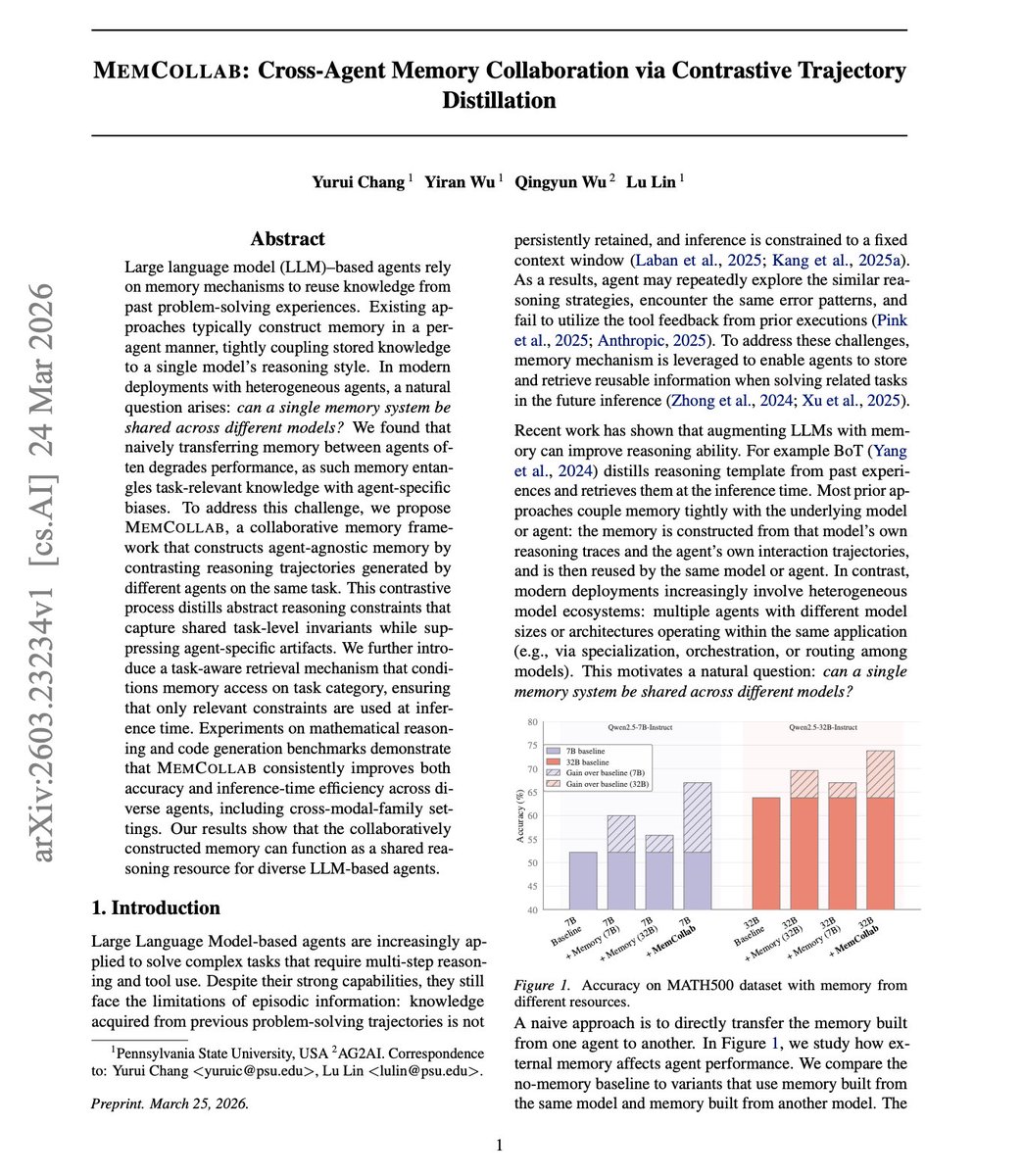

Agents build useful memory during tasks, but that memory is trapped: transfer it to a different model and performance often gets worse, not better. So the big question is whether a single memory system can be shared across different models.

New research explains why this happens and offers a fix.

MemCollab uses contrastive trajectory distillation to separate universal task knowledge from agent-specific biases. It contrasts reasoning trajectories from different agents to extract abstract reasoning constraints that capture shared, task-level invariants.

A task-aware retrieval system then conditions on the task category to apply the right constraints at the right time.

As teams run heterogeneous agents built on different models, collaborative memory becomes a shared reasoning resource rather than a liability.

MemCollab improves both accuracy and inference-time efficiency on mathematical reasoning and code generation, even when memory is shared across model families.

Paper: https://t.co/GfbTUROsEZ

Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
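To make the two MemCollab mechanisms concrete, here is a toy Python sketch. It is not the paper's implementation. It assumes each agent's trajectory has already been reduced to a list of abstract step labels, and it stands in for contrastive distillation with a plain set intersection: steps every agent used count as shared task-level constraints, and the rest count as agent-specific. The names `distill_shared_constraints` and `TaskAwareMemory`, plus the example step strings, are hypothetical.

```python
from collections import defaultdict


def distill_shared_constraints(
    trajectories: dict[str, list[str]],
) -> tuple[set[str], dict[str, set[str]]]:
    """Toy stand-in for contrastive distillation (assumption: set intersection).

    `trajectories` maps a hypothetical agent name to the abstract steps it
    took on the same task. Steps used by every agent are kept as shared
    constraints; whatever is left per agent is treated as agent-specific bias.
    """
    step_sets = [set(steps) for steps in trajectories.values()]
    shared = set.intersection(*step_sets) if step_sets else set()
    specific = {agent: set(steps) - shared for agent, steps in trajectories.items()}
    return shared, specific


class TaskAwareMemory:
    """Hypothetical memory store whose retrieval is conditioned on task category."""

    def __init__(self) -> None:
        self._by_category: dict[str, set[str]] = defaultdict(set)

    def add(self, category: str, constraints: set[str]) -> None:
        # Merge newly distilled constraints into this category's bucket.
        self._by_category[category] |= constraints

    def retrieve(self, category: str) -> list[str]:
        # Only constraints stored under this category are returned;
        # .get() avoids creating an empty bucket for unseen categories.
        return sorted(self._by_category.get(category, set()))


# Usage: two agents solve the same math task in different ways.
trajectories = {
    "agent_a": ["check edge cases", "verify units", "try brute force first"],
    "agent_b": ["check edge cases", "verify units", "guess and backtrack"],
}
shared, specific = distill_shared_constraints(trajectories)
memory = TaskAwareMemory()
memory.add("math", shared)
print(memory.retrieve("math"))  # ['check edge cases', 'verify units']
print(memory.retrieve("code"))  # []
```

Keying retrieval on the task category means constraints distilled from math trajectories are never handed to a code-generation task in this sketch, which mirrors the "right constraints at the right time" idea in a very simplified form.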