@karpathy

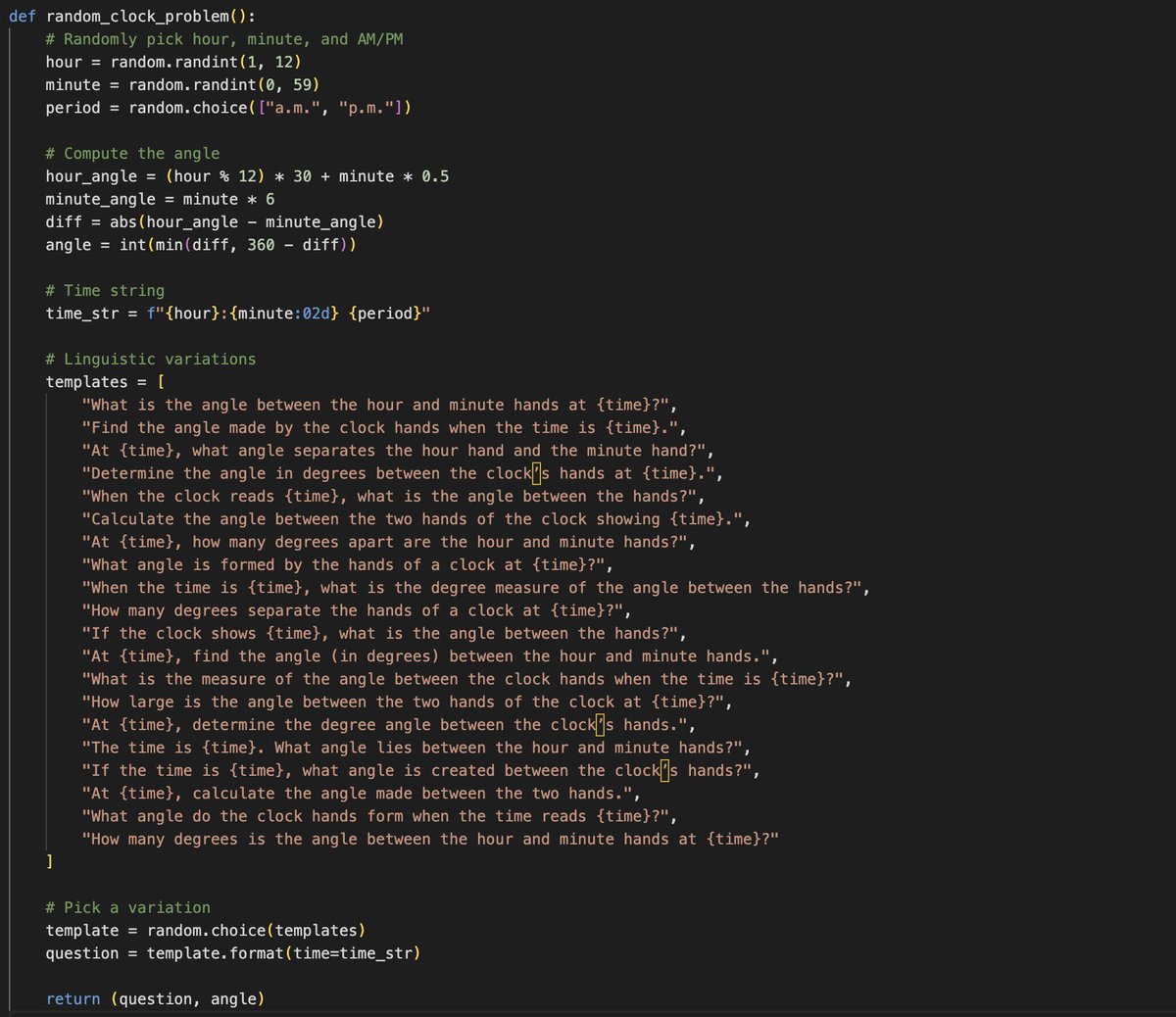

Transforming human knowledge, sensors and actuators from human-first and human-legible to LLM-first and LLM-legible is a beautiful space with so much potential and so much can be done...

One example I'm obsessed with recently - for every textbook pdf/epub, there is a perfect "LLMification" of it intended not for a human but for an LLM (though it is a non-trivial transformation that would need human-in-the-loop involvement).

- All of the exposition is extracted into a markdown document, including all latex, styling (bold/italic), tables, lists, etc. All of the figures are extracted as images.
- All worked problems get extracted into SFT examples. Any references made to previous figures/tables/etc. are parsed and included.
- All practice problems are extracted into environment examples for RL. The correct answers are located in the answer key and attached. Any additional information is added as "answer key" for a potential LLM judge.
- Synthetic data expansion. For every specific problem, you can create an infinite problem generator, which emits problems of that type. For example, if a problem is "What is the angle between the hour and minute hands at 9am?", you can imagine generalizing that to any arbitrary time and calculating answers using Python code, and possibly generating synthetic variations of the prompt text.
- All of the data above could be nicely indexed and embedded into a RAG database for later reference, or maybe MCP servers that make it available.

Then just as a (human) student could take a high school physics course, an LLM could take it in the exact same way. This would be a significantly richer source of legible, workable information for an LLM than just something like pdf-to-text (current prevailing practice), which simply asks the LLM to predict the textbook content top to bottom token by token (umm - lame).

As just a quick and crappy example of synthetic variations of the above example, GPT-5 gave me this problem generator (see image), which can now generalize that problem template to many variations:

- When the time is 11:07 a.m., what is the degree measure of the angle between the hands? (Answer: 68)
- Determine the angle in degrees between the clock’s hands at 4:14 a.m. (Answer: 43)
- What angle do the clock hands form when the time reads 11:47 a.m.? (Answer: 71)
- At 7:02 a.m., what angle separates the hour hand and the minute hand? (Answer: 161)
- At 4:14 a.m., calculate the angle made between the two hands. (Answer: 43)
- What angle is formed by the hands of a clock at 4:45 p.m.? (Answer: 127)
- What is the angle between the hour and minute hands at 8:37 p.m.? (Answer: 36)

(infinite practice problems can be created...)
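
For readers without the image, here is a minimal sketch of what such a generator could look like. It is not the GPT-5 code from the image: the names `TEMPLATES`, `clock_angle` and `generate_problem` and the output dict shape are all assumptions made for illustration. It returns exact angles, which can land on half degrees (11:07 gives 68.5), so a real pipeline would still need to pick a rounding convention for the answer key.

```python
import random

# Hypothetical prompt templates; a real pipeline might generate these with an LLM.
TEMPLATES = [
    "What is the angle between the hour and minute hands at {time}?",
    "At {time}, what angle separates the hour hand and the minute hand?",
    "What angle is formed by the hands of a clock at {time}?",
]


def clock_angle(hour: int, minute: int) -> float:
    """Smaller angle in degrees between the hour and minute hands."""
    # Hour hand: 30 degrees per hour plus 0.5 degrees per elapsed minute.
    hour_deg = (hour % 12) * 30 + minute * 0.5
    # Minute hand: 6 degrees per minute.
    minute_deg = minute * 6
    diff = abs(hour_deg - minute_deg)
    return min(diff, 360 - diff)


def generate_problem(rng: random.Random) -> dict:
    """Emit one randomly phrased clock problem with its computed answer."""
    hour = rng.randint(1, 12)
    minute = rng.randint(0, 59)
    meridiem = rng.choice(["a.m.", "p.m."])
    time_text = f"{hour}:{minute:02d} {meridiem}"
    prompt = rng.choice(TEMPLATES).format(time=time_text)
    return {"prompt": prompt, "answer": clock_angle(hour, minute)}


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so a run is reproducible
    for _ in range(3):
        print(generate_problem(rng))
```

Passing in a seeded `random.Random` keeps the emitted problems reproducible, which would matter if they feed an RL environment or a fixed eval set.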