@vllm_project

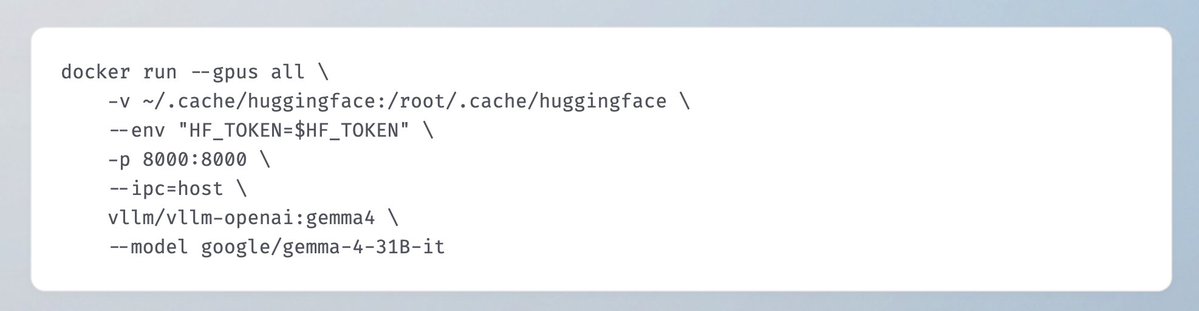

🎉 Gemma 4 is officially available on vLLM! Byte for byte, these are the most capable open models for advanced reasoning and agentic workflows.

Key features include:
- Native Multimodal Support: full vision and audio capabilities, with a context window of up to 256K tokens.
- Broad Hardware Support from Day 0: enabled for major GPU architectures and Google TPUs.
- Openly Accessible: now live under an Apache 2.0 license.

Here's a quick start 👇 A detailed deployment recipe and the model release blog are coming soon!

#Gemma4 #vLLM