@LiorOnAI

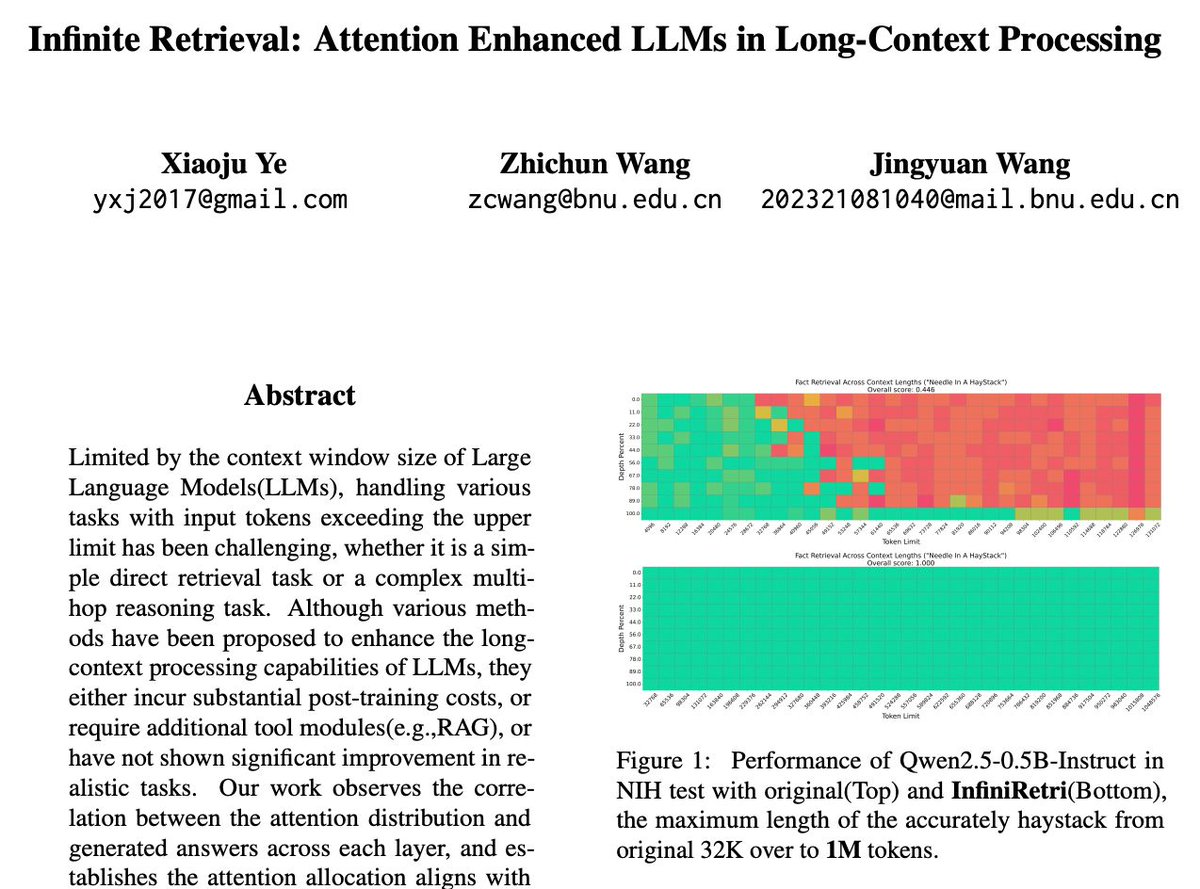

No more needle in a haystack. LLMs can now retrieve from contexts of over 1M tokens, with no RAG and no retraining. Infinite Retrieval introduces InfiniRetri, a method that uses the transformer's own attention to handle infinite-length contexts natively. No toolkits. No memory modules. https://t.co/JTxlJweASn