@GT_HaoKang

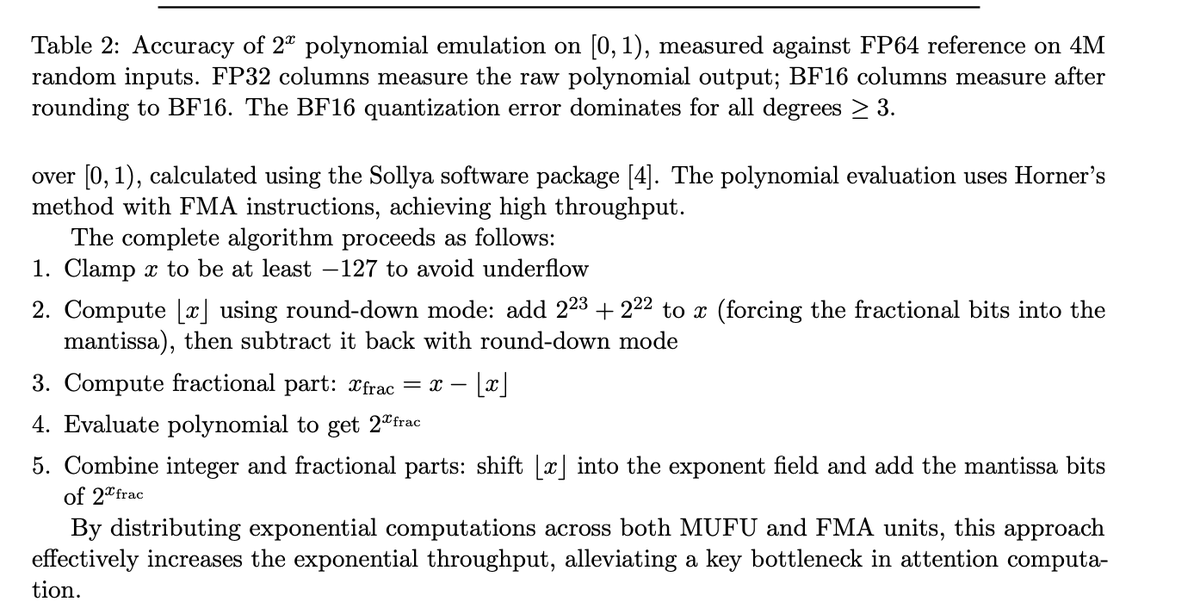

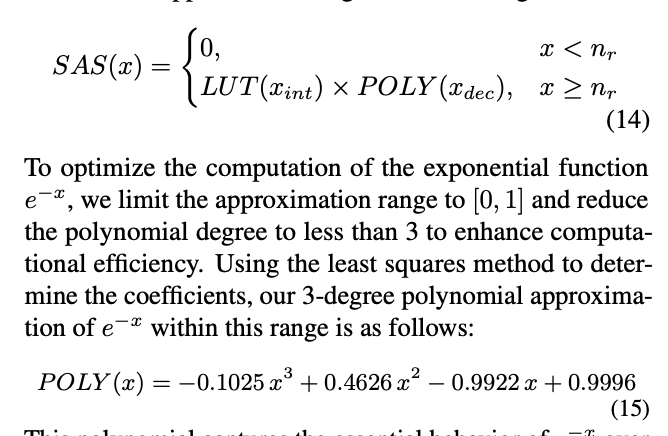

Just found out that FlashAttention uses a lookup table + linear approximation to accelerate softmax. We tried a similar strategy in work done at MSR two years ago on A100, and got over 20% end-to-end speedup of self-attention. If you are interested in how to parallelize lookup tables and linear approximation to replace nonlinear functions, please take a look at our paper. Paper: https://t.co/ShiHZS6RKz #FlashAttention4 #AI #Mlsys
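For anyone curious what "lookup table + linear approximation" means in practice, here is a minimal CPU sketch in plain Python. It is not the FlashAttention kernel and not the method from our paper, just the general idea: split the input range into segments, store one slope and one intercept per segment, and replace each `exp` call with a table lookup plus one multiply-add. The range `X_MIN`, the segment count `N_SEGMENTS`, and the function names `exp_lut` and `softmax_lut` are assumptions made up for this illustration.

```python
import math

# Hypothetical parameters, chosen only for illustration.
X_MIN = -16.0        # inputs below this are clamped (exp(-16) is about 1e-7)
N_SEGMENTS = 256     # number of linear segments in the table
STEP = -X_MIN / N_SEGMENTS

# Precompute (slope, intercept) for each segment once.
# Segment i covers [X_MIN + i*STEP, X_MIN + (i+1)*STEP].
TABLE = []
for i in range(N_SEGMENTS):
    x0 = X_MIN + i * STEP
    y0 = math.exp(x0)
    y1 = math.exp(x0 + STEP)
    slope = (y1 - y0) / STEP
    TABLE.append((slope, y0 - slope * x0))


def exp_lut(x):
    """Approximate exp(x) for x <= 0: one table lookup plus one multiply-add."""
    x = max(X_MIN, min(0.0, x))                       # clamp into the table range
    i = min(int((x - X_MIN) / STEP), N_SEGMENTS - 1)  # segment index
    slope, intercept = TABLE[i]
    return slope * x + intercept


def softmax_lut(scores):
    """Softmax that subtracts the max first, so every exp input is <= 0."""
    m = max(scores)
    exps = [exp_lut(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]
```

Subtracting the row max keeps every input in a bounded non-positive range, which is what makes a small fixed table workable. On a GPU each thread would do its own lookup and multiply-add in parallel; this sketch only shows the arithmetic.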