@teortaxesTex

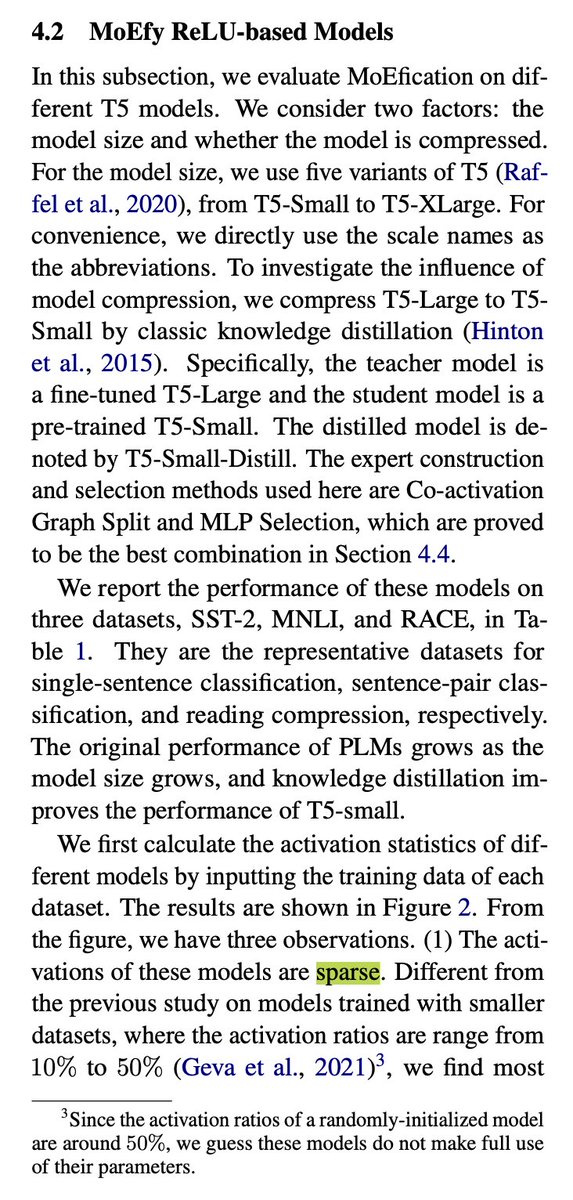

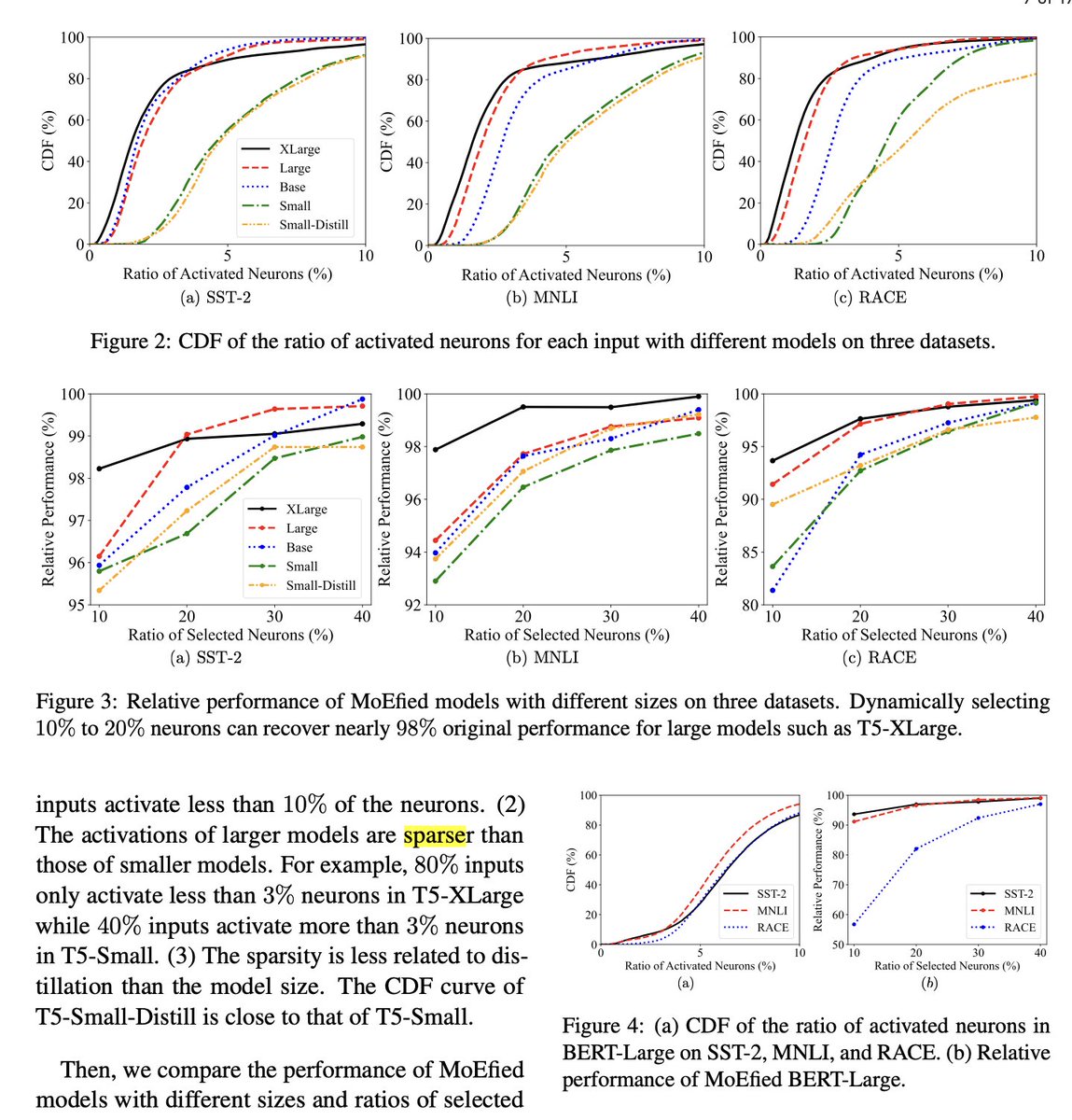

They are hinting at that, sure. But they're testing on OPT, as do most of those Hype-Aware Quantization papers. Why? OPT's FF layers use ReLU, which sacrifices perplexity but makes activations sparse. I'm skeptical it'll work for SwiGLU in LLaMA… without retraining. (paper: MoEfication) https://t.co/LbkvP5LW5i
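
To make the ReLU vs SwiGLU point concrete, here's a toy sketch. Everything in it is an assumption for illustration: random Gaussian weights, made-up sizes, and a hypothetical `compare_sparsity` helper. It's not OPT or LLaMA and says nothing about trained models. It only shows the structural difference: ReLU produces exact zeros in the hidden layer, while SiLU-gated units are merely small, never exactly zero.

```python
import math
import random


def relu(x):
    return max(0.0, x)


def silu(x):
    # SiLU (a.k.a. swish): x * sigmoid(x); smooth, so outputs are rarely exactly 0.
    return x / (1.0 + math.exp(-x))


def matvec(W, x):
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def random_matrix(rows, cols, rng):
    # Scale by 1/sqrt(cols) so pre-activations stay around unit variance.
    scale = 1.0 / math.sqrt(cols)
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def zero_fraction(values):
    return sum(1 for v in values if v == 0.0) / len(values)


def compare_sparsity(d_model=64, d_ff=256, seed=0):
    """Return (ReLU zero fraction, SwiGLU zero fraction) for one random input.

    Toy setup with random weights; sizes and names are illustrative assumptions.
    """
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(d_model)]
    W_up = random_matrix(d_ff, d_model, rng)
    W_gate = random_matrix(d_ff, d_model, rng)

    up = matvec(W_up, x)
    gate = matvec(W_gate, x)

    # ReLU FFN hidden layer: relu(W_up x)
    relu_hidden = [relu(u) for u in up]
    # SwiGLU hidden layer: silu(W_gate x) * (W_up x), elementwise
    swiglu_hidden = [silu(g) * u for g, u in zip(gate, up)]

    return zero_fraction(relu_hidden), zero_fraction(swiglu_hidden)


if __name__ == "__main__":
    relu_frac, swiglu_frac = compare_sparsity()
    print(f"ReLU exact zeros:   {relu_frac:.2%}")
    print(f"SwiGLU exact zeros: {swiglu_frac:.2%}")
```

With exact zeros you can skip those hidden units outright; with SwiGLU you'd need a magnitude threshold, which changes the output. That's the gap between "sparse" and "small".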