@omarsar0

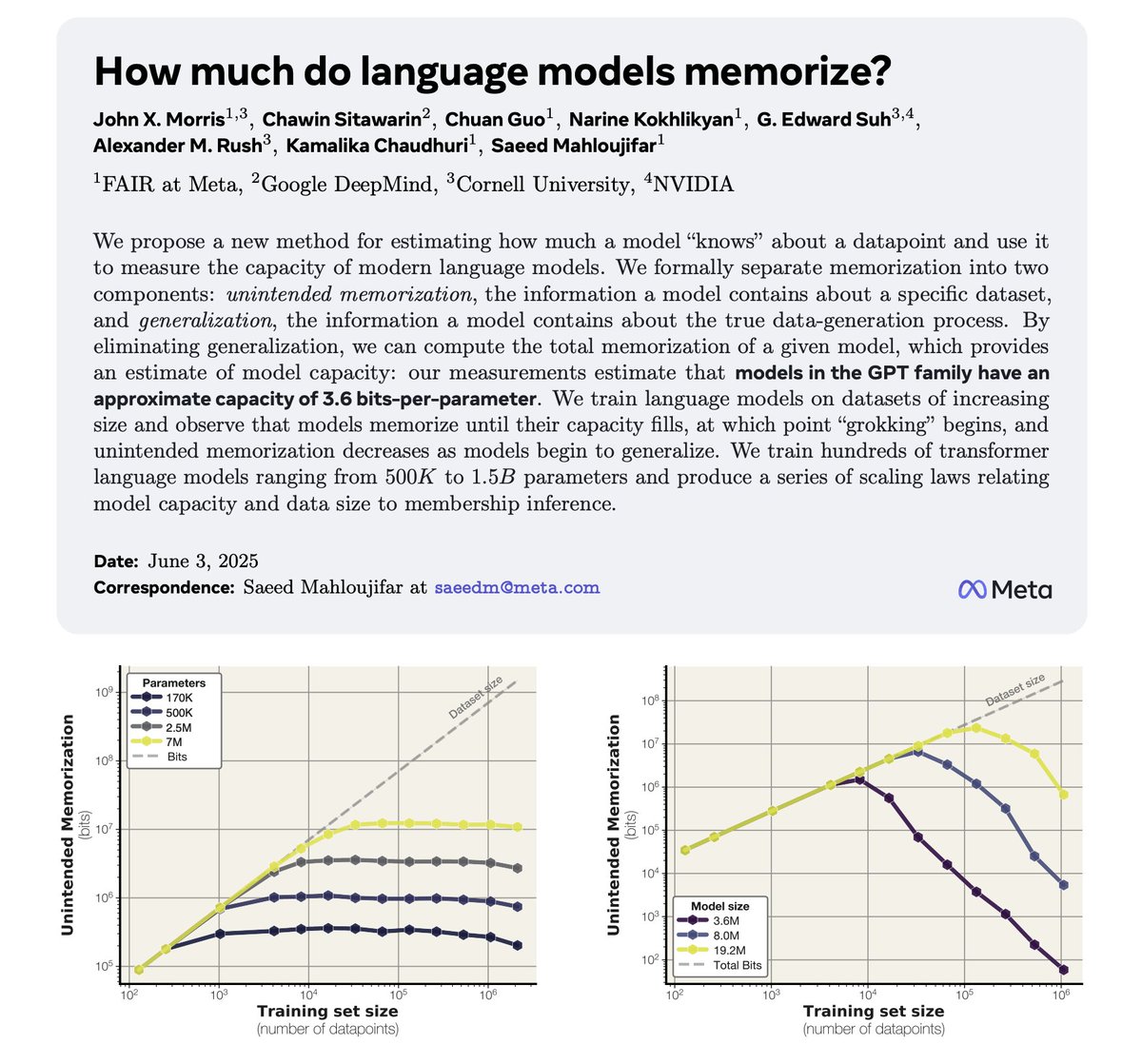

How much do LLMs memorize? Meta and collaborators suggest that they can estimate model capacity by measuring memorization. "Models in the GPT family have an approximate capacity of 3.6 bits-per-parameter." Once capacity fills, generalization begins! More in my notes below: https://t.co/akfNnDqVqW
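
To put the quoted figure in perspective, here is a rough back-of-the-envelope sketch. It assumes capacity scales linearly with parameter count at about 3.6 bits per parameter, as the quote implies; the function names and the example model sizes are my own illustration, not from the paper.

```python
# Back-of-the-envelope estimate, assuming ~3.6 bits of capacity per parameter
# (the figure quoted above for GPT-family models). Helper names are hypothetical.
BITS_PER_PARAM = 3.6


def capacity_bits(num_params: int, bits_per_param: float = BITS_PER_PARAM) -> float:
    """Estimated memorization capacity in bits."""
    return num_params * bits_per_param


def capacity_megabytes(num_params: int, bits_per_param: float = BITS_PER_PARAM) -> float:
    """Same estimate in megabytes (8 bits per byte, 1e6 bytes per MB)."""
    return capacity_bits(num_params, bits_per_param) / 8 / 1e6


if __name__ == "__main__":
    # Illustrative parameter counts only, not tied to any specific model.
    for n in (1_000_000, 100_000_000, 1_000_000_000):
        print(f"{n:>13,} params: {capacity_megabytes(n):,.2f} MB")
```

Under that assumption, a 1B-parameter model would top out at roughly 450 MB of memorized information.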