@rohanpaul_ai

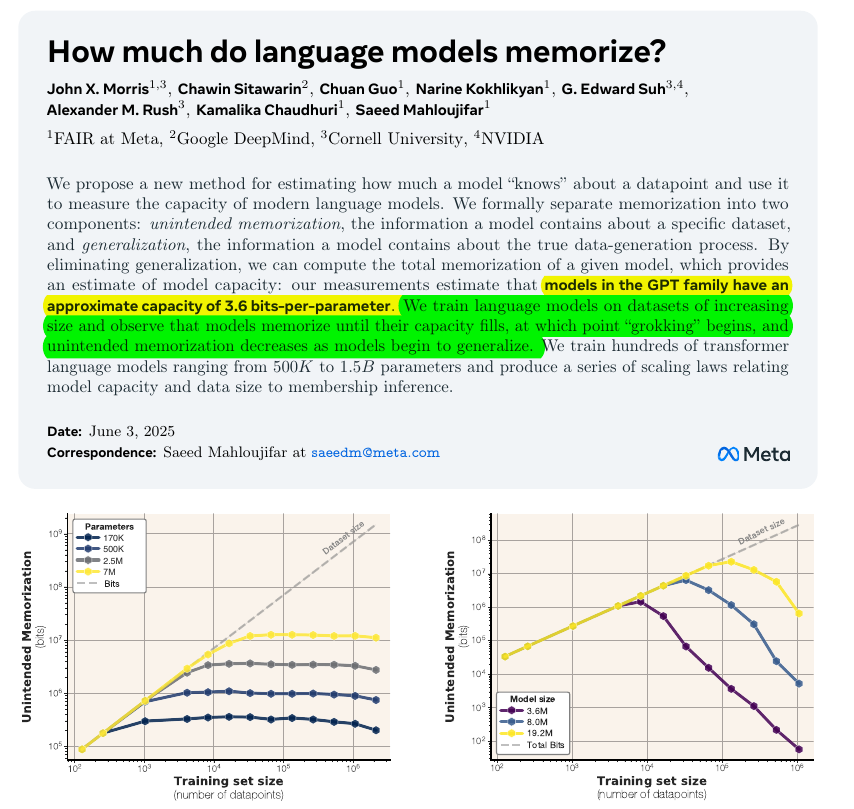

A classic paper, collab between @AIatMeta , @GoogleDeepMind , and @NVIDIAAIDev Language models keep personal facts in a measurable amount of “storage”. This study shows how to count that storage—and when models swap memorization for real learning. 📡 The Question Can we separate a model’s rote recall of training snippets from genuine pattern learning, and measure both in bits? The paper says Yes. Encode the dataset twice: once with the target model, once with a strong reference model that cannot memorize this data. The extra bit-savings the target achieves beyond the reference counts as rote memorization. The shared savings reflect genuine pattern learning. 🧮 The Measurement Trick Treat the model like a compressor. If a data point becomes shorter when the model is present, those saved bits reveal memorization. Subtract the savings that also appear in a strong reference model; the remainder is “unintended” memorization. 📏 What the Numbers Say GPT-style transformers store roughly 3.6 bits per parameter before running out of space. Once full, memorization flattens and test loss starts the familiar “double descent” curve right when dataset information overtakes capacity. 🔄 Why It Matters Loss-based membership inference obeys a clean sigmoid: bigger models or smaller datasets raise attack success; large token-to-parameter ratios push success to random chance. Practitioners can now predict privacy risk from simple size ratios instead of running attacks. Most interesting nugget: a single scaling rule—bits per parameter—explains capacity limits, double descent, and membership-leak risk all in one shot.