@sh_reya

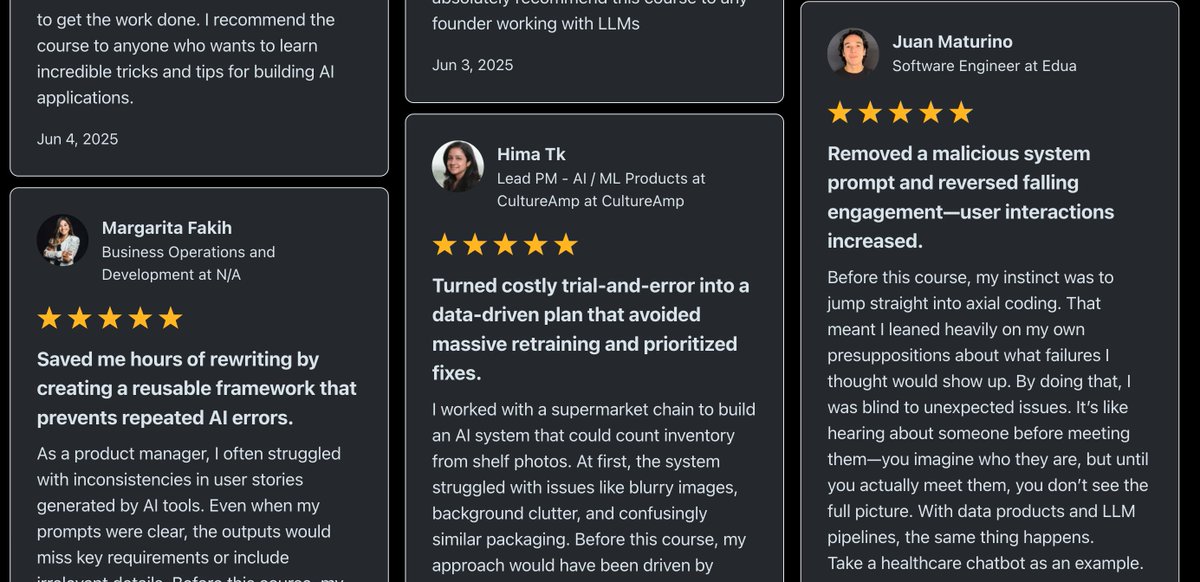

We have done 2 cohorts and ~800 people have meaningfully engaged with the AI evals course. Hamel shared a bunch of testimonials with me yesterday. I was really astounded that they are not just generic testimonials; people mentioned very specific results and concepts. It seems like people have been really unblocked and are able to get a lot further in their AI projects, legitimately. E.g.,

"I often ran into code quality issues when using AI assistants, but I didn’t have a structured way to make sense of them. Before this course, I would just label outputs as “messy code” without really digging into the underlying problems.... After this course, I now analyze them systematically across dimensions: things like hardcoded tests, long methods, poor formatting, bad naming, poor architecture choices, duplication, dead code, or ignoring available quality tools." - SWE

"The axial coding just hit different. Before this course, my approach to failures was more of a “vibe investigation,” poking around without a clear structure. After this course, I now cluster failures systematically and trace them back to their core issues... I finally feel like I have a proper way to identify the root problems in my agent instead of just guessing." - Staff SWE

"Some of my favorite highlights: - Build a custom data annotation app! I was so intimidated by this, but I finally made the leap and vibe-coded something out in an afternoon. It has 10x'd my ability to review conversations." - Senior PM

"It's really hard to build LLM judges so be really thoughtful about what you build them for. Often, the biggest impact comes from talking disagreements out and figuring out why there is a disagreement in the first place: are your goals unclear? This seemingly technical course has made me a better PM." - Senior PM

"I have been following Hamel's and Shreya's work for quite some time and it was really awesome to learn from them all the concepts of error analysis, measurement best practices, LLM as Judge + how to make sure it is reliable with human evaluations, collaborative analysis of errors, evaluation of multiturn chats, creation of datasets for CI/CD etc. The last topic on accuracy and cost optimization is really useful as we are seeing in our applications when scaling." - Director of ML

"I was making the common mistake of jumping straight to LLM-as-a-judge without first figuring out what I actually needed to measure. The sections on calibrating LLM judges with TPR/TNR methods were exactly the technical content I was looking for." - ML Engineer

"We kept testing judge models on the same examples we used to build them; obviously they looked great until they hit production." - ML Engineer

"The cost optimization stuff alone could pay for the course (they showed examples of 60%+ savings)" - ML Engineer
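
For anyone unfamiliar with the TPR/TNR idea mentioned in a couple of these testimonials, here is a minimal sketch of what measuring a judge against human labels on held-out examples could look like. It assumes binary pass/fail labels with `True` meaning "pass"; the function names (`split_dev_test`, `tpr_tnr`) and the toy labels are hypothetical illustrations, not material from the course.

```python
import random


def split_dev_test(examples, test_fraction=0.5, seed=0):
    """Shuffle labeled examples and hold out a test split.

    The dev split is what you iterate on while writing the judge prompt;
    the test split is only used to measure the finished judge.
    """
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def tpr_tnr(human_labels, judge_labels):
    """Return (TPR, TNR) of the judge against human labels (True = pass)."""
    if len(human_labels) != len(judge_labels):
        raise ValueError("label lists must have the same length")
    pairs = list(zip(human_labels, judge_labels))
    true_pos = sum(1 for h, j in pairs if h and j)
    true_neg = sum(1 for h, j in pairs if not h and not j)
    positives = sum(1 for h in human_labels if h)
    negatives = len(human_labels) - positives
    # TPR: share of human "pass" items the judge also passes.
    tpr = true_pos / positives if positives else float("nan")
    # TNR: share of human "fail" items the judge also fails.
    tnr = true_neg / negatives if negatives else float("nan")
    return tpr, tnr


# Hypothetical held-out labels (assumption: made-up data for illustration).
human = [True, True, True, False, False, True, False, True]
judge = [True, True, False, False, True, True, False, True]

tpr, tnr = tpr_tnr(human, judge)
print(f"TPR={tpr:.2f} TNR={tnr:.2f}")
```

Reporting the two rates separately, rather than a single accuracy number, shows whether the judge errs by passing bad outputs or by failing good ones, and computing them only on the held-out split avoids the "looked great until production" trap described above.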