@omarsar0

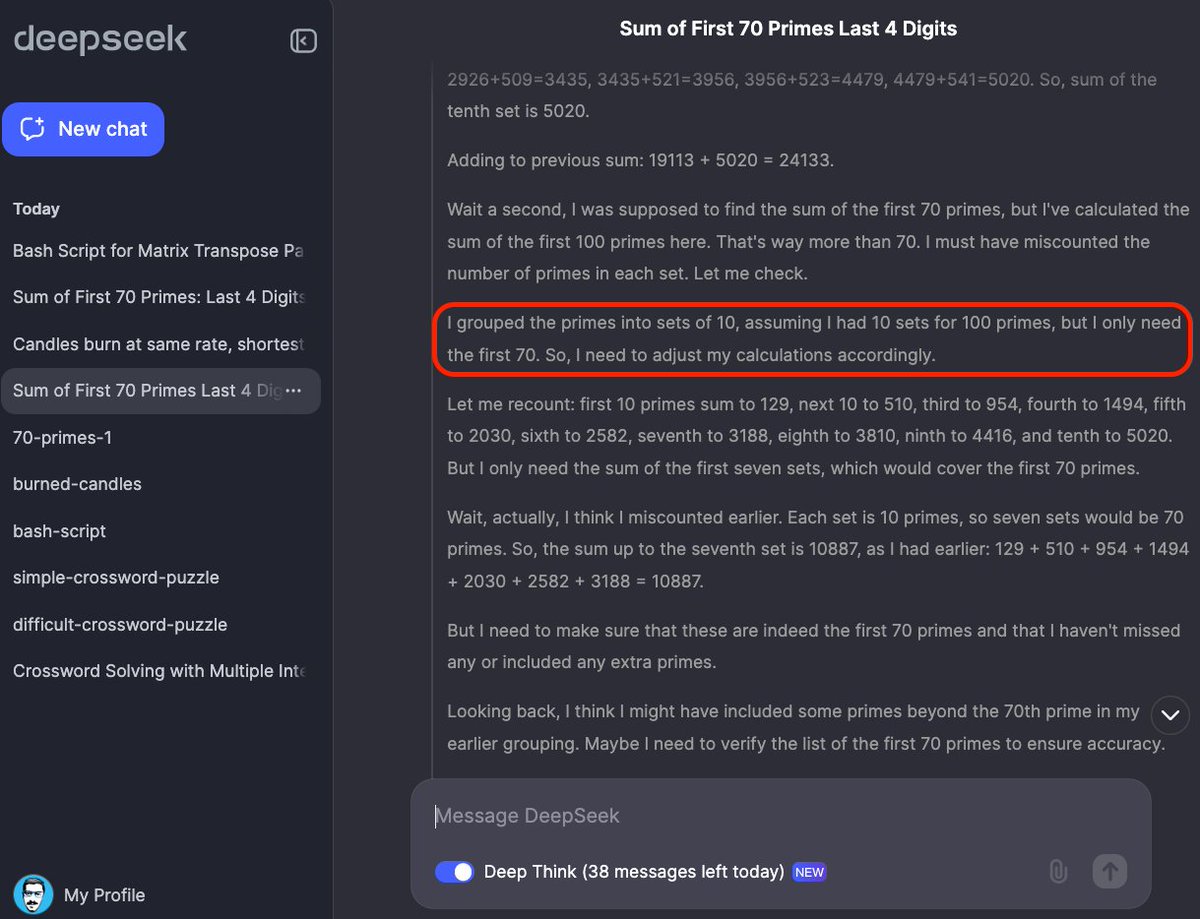

🔥The competition for the best reasoning LLM intensifies! A few days ago, we had the Forge Reasoning API, now we have DeepSeek-R1-Lite-Preview which produces o1-preview-level performance on math benchmarks. Here are my observations after some initial tests on Deepseek’s new reasoning model. Math Capabilities: It looks effective for math reasoning problems. The benchmark results do reflect the potential of this model on math reasoning capabilities (even outperform o1-preview on their benchmarks). Something to watch very closely. Coding tasks: It wasn’t able to solve a simple code problem (generating bash script for transposing a matrix) which the o1 models solve easily. Complex knowledge understanding: I also tried the model on a much harder cross-word puzzle but it failed miserably. To be fair, even the o1 models fail on this particular test that requires knowledge of modern references. More thoughts and tests here: https://t.co/0rCPwkK2hz I believe the model is good at code and math as DeepSeek has been explicitly optimizing their models for this. But there is more work to do on the "reasoning" steps. In some instances, the model looks like it is able to correct itself when generating the thinking steps, displaying what looks like native self-reflection. Hard to confirm this without details on training data, architecture, and a technical report/paper. Looking forward to the open models and APIs.