@outsource_

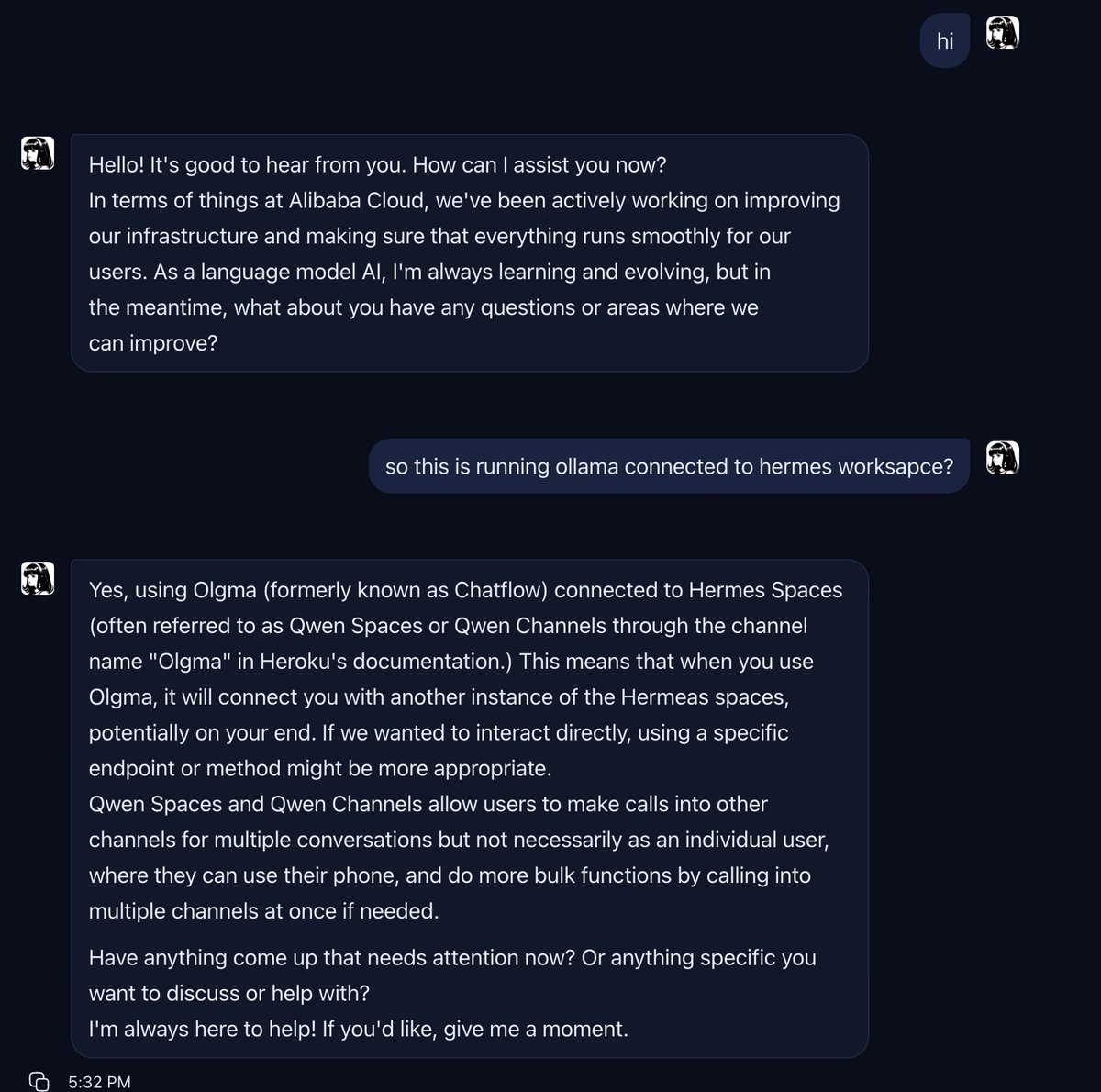

🚀 Hermes Workspace now connects to ANY local model

Just shipped: plug in Ollama, LM Studio, vLLM, or any OpenAI-compatible endpoint and get the full workspace: sessions, memory, skills, file editor, and dashboard. No cloud API key needed. Your model, your machine, full agent workspace.

Setup:

    provider: ollama
    model: qwen2.5:0.5b
    custom_providers:
      - name: ollama
        base_url: http://127.0.0.1:11434/v1

Two modes:
→ Portable: point the workspace directly at any /v1/completions endpoint
→ Enhanced: route through the Hermes gateway for sessions + memory + tools

6 bugs fixed in one sprint:
• Startup screen no longer blocks non-Hermes backends
• File editor actually loads files now
• Dashboard shows real session data
• Jobs API crash fixed
• Dark mode text is now visible
• Local model provider routing works end-to-end

GitHub: https://t.co/zf3xrKYFqZ
Site: https://t.co/z3BsDUGNcv
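To make the setup config concrete, here is a minimal sketch of what "provider routing" to a local OpenAI-compatible server could look like. It assumes the YAML above has already been loaded into a plain dict, and that the workspace picks the `custom_providers` entry whose `name` matches `provider`. That matching rule and the helper names `resolve_base_url`, `build_chat_request`, and `send` are hypothetical illustrations, not Hermes code. It also assumes the server exposes the `/chat/completions` route under `base_url` (Ollama, LM Studio, and vLLM all serve `/v1/chat/completions`). Only the Python standard library is used.

```python
import json
import urllib.request
from typing import Any

# The setup config, as it might look once loaded from YAML
# (assumption: the keys map onto a plain dict like this).
CONFIG: dict[str, Any] = {
    "provider": "ollama",
    "model": "qwen2.5:0.5b",
    "custom_providers": [
        {"name": "ollama", "base_url": "http://127.0.0.1:11434/v1"},
    ],
}


def resolve_base_url(config: dict[str, Any]) -> str:
    """Return base_url of the custom provider named by `provider` (hypothetical rule)."""
    wanted = config["provider"]
    for entry in config.get("custom_providers", []):
        if entry.get("name") == wanted:
            # Drop a trailing slash so route joining stays predictable.
            return entry["base_url"].rstrip("/")
    raise KeyError(f"no custom provider named {wanted!r}")


def build_chat_request(config: dict[str, Any], prompt: str) -> urllib.request.Request:
    """Build a POST to the OpenAI-compatible chat completions route under base_url."""
    url = resolve_base_url(config) + "/chat/completions"
    body = {
        "model": config["model"],
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the first choice's text (needs a running server)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]


if __name__ == "__main__":
    request = build_chat_request(CONFIG, "Say hello in five words.")
    print(request.full_url)
    # print(send(request))  # uncomment once Ollama is running locally
```

Swapping in LM Studio or vLLM would, under the same assumptions, only mean adding another `custom_providers` entry with that server's `base_url` and pointing `provider` at its name.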