@iScienceLuvr

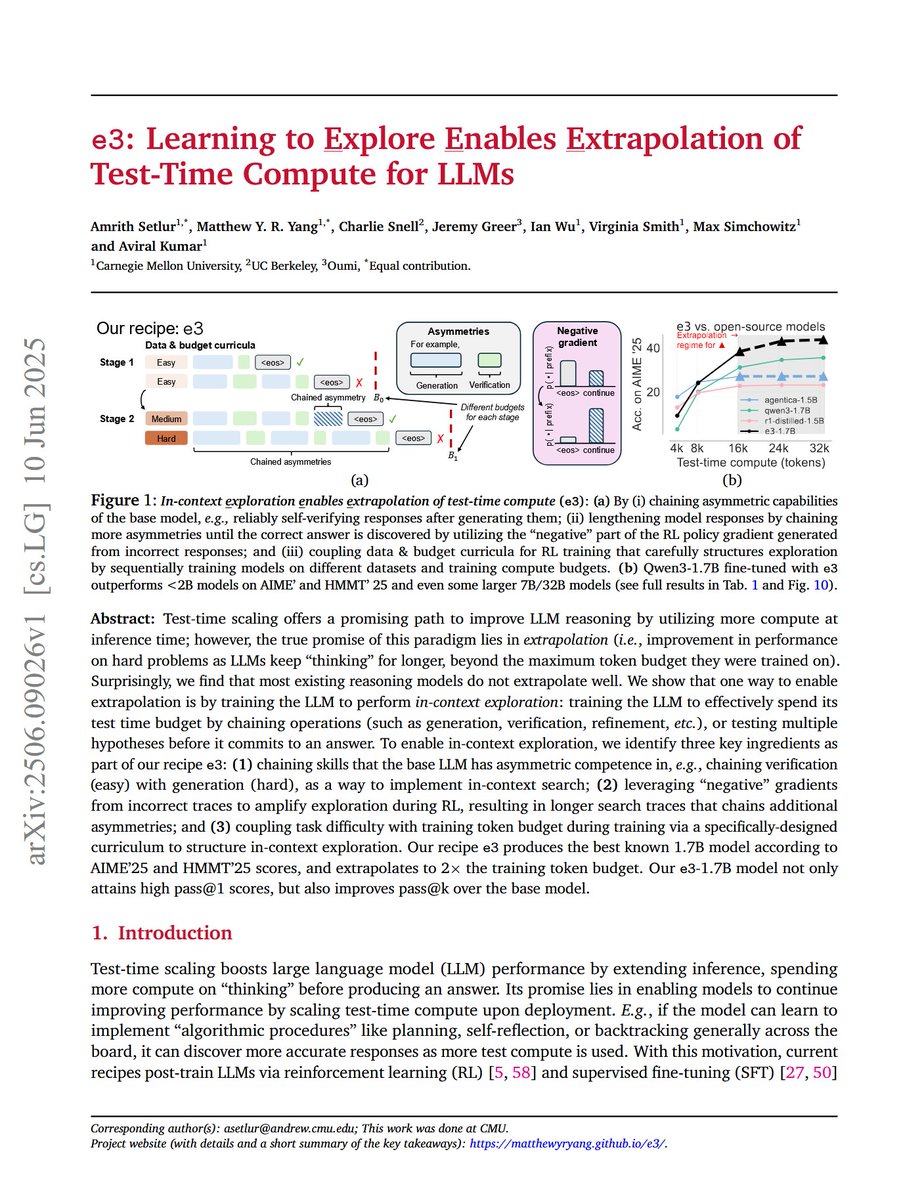

e3: Learning to Explore Enables Extrapolation of Test-Time Compute for LLMs "Our recipe e3 produces the best known 1.7B model according to AIME'25 and HMMT'25 scores, and extrapolates to 2x the training token budget." "Surprisingly, we find that most existing reasoning models… https://t.co/63BYLjkBJo