@omarsar0

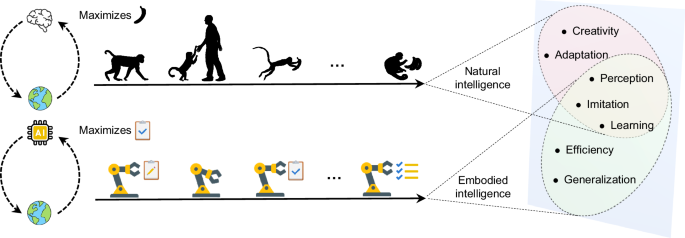

Designing reward functions for RL agents is kind of broken. The default approach remains manual engineering: domain experts iteratively craft reward signals through trial-and-error. This requires significant expertise, takes enormous human effort, and often fails when task complexity increases. But what if agents could discover their own optimal reward functions? This new research introduces a bilevel optimization framework that automatically discovers optimal reward functions for embodied RL agents through regret minimization. The optimal reward function can be defined as one that minimizes the gap between the learned policy and the true optimal policy. No expert demonstrations needed. No human feedback required. How it works: Two optimization levels run simultaneously. The lower level trains the RL agent to maximize rewards as usual. The upper level continuously updates the reward function itself, guided by a meta-gradient that minimizes policy regret. The reward function learns to assign high values to critical states like success or failure, while providing dense feedback throughout the state space. The framework works across both value-based agents (DQN) and policy-based agents (PPO, SAC, TD3) without task-specific tuning. In data center energy management, all RL agents using discovered rewards achieved energy reductions exceeding 60%, compared to 21-52% for baseline RL. In UAV trajectory tracking, the approach enabled PPO agents to successfully complete tasks where hand-designed rewards failed entirely. In sparse-reward OpenAI benchmarks, agents using discovered rewards outperformed baselines in both convergence speed and final performance. The discovered reward functions also reveal interpretable structure: they automatically identify critical states and encode latent relationships between states and rewards that match physics-based reward designs, despite having no explicit mathematical model. Paper: https://t.co/W9fRH6sbDq Learn to build effective AI Agents in our academy: https://t.co/JBU5beIoD0