@iScienceLuvr

Copy Suppression: Comprehensively Understanding an Attention Head

website: https://t.co/szSr7L6KZ1
abs: https://t.co/yD459BioOs

"To the best of our knowledge, this is the most comprehensive description of the complete role of a component in a language model to date." https://t.co/fQUUvc2ypp

copy-suppression.streamlit.app

arXiv

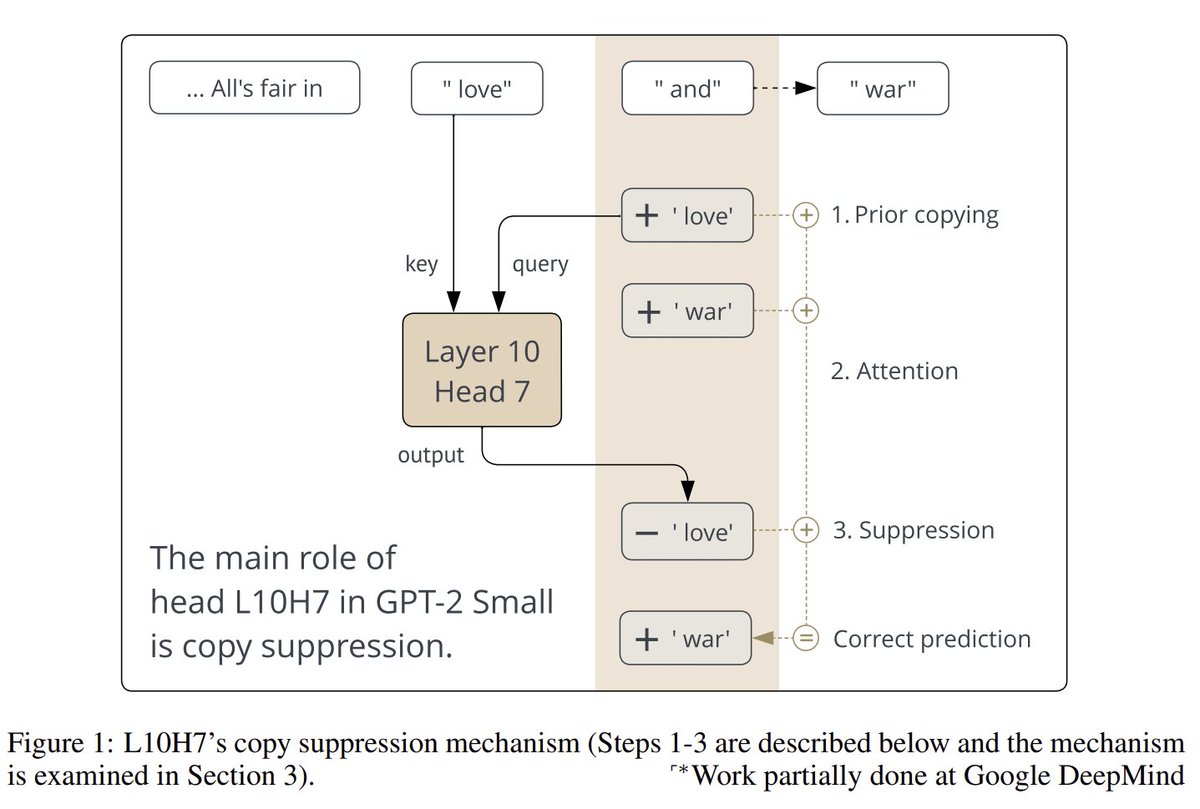

Copy Suppression: Comprehensively Understanding an Attention Head

The paper analyzes an attention head in GPT-2 Small that suppresses naive copying behavior, which improves model calibration and helps explain self-repair...

• Introduces copy suppression in GPT-2 Small.

• Links copy suppression to self-repair in language models.