@seb_ruder

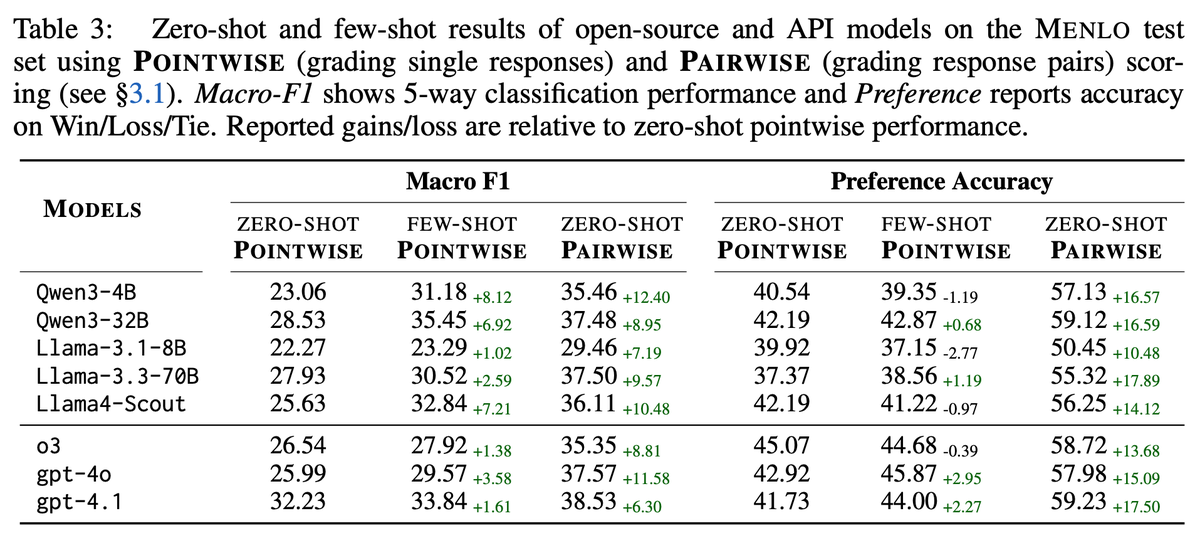

We benchmark: 1. Zero-shot LLM judges 2. RL- & SFT-trained reward models 3. Human raters (gold) Findings: – Pairwise + rubric-based eval boosts zero-shot LLM judge performance – But: gap with humans remains across languages https://t.co/UfSuaq2xdZ