@LucSGeorges

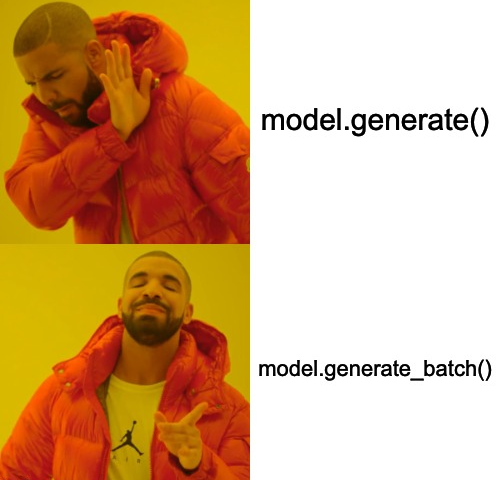

we've been pushing commits to transformers discreetly, so it's time to talk about what we've been cooking for the last few months:

⚡️ Continuous Batching is in transformers ⚡️

most notably, this will simplify evaluation and your training loop: no extra dependencies or infra needed to get fast inference, and no convoluted code to update your weights

note that speed is currently not on par with the best inference frameworks and servers out there, and probably never will be. the goal is *not* to become as fast: we want to complement the existing landscape with features like these, aiming for transformers to be the toolbox for tinkering with and building models
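quick intuition for what "continuous batching" buys you: with static batching, a batch only finishes when its longest sequence does, so slots held by short sequences sit idle. with continuous batching, a finished sequence's slot is refilled from the queue at the next decoding step. below is a toy step-counting simulation of that idea. it is a conceptual sketch under simplified assumptions (one token per request per step, no prefill cost), with hypothetical helper names. it is not the transformers API or implementation

```python
from collections import deque


def static_batching_steps(lengths, max_batch_size):
    """Toy model: each batch must fully finish before the next one starts."""
    steps = 0
    for i in range(0, len(lengths), max_batch_size):
        # the whole batch runs until its longest request is done
        steps += max(lengths[i:i + max_batch_size])
    return steps


def continuous_batching_steps(lengths, max_batch_size):
    """Toy model: finished requests are replaced by queued ones before every step."""
    queue = deque(lengths)
    active = []  # tokens still to generate for each in-flight request
    steps = 0
    while queue or active:
        # refill any free slots from the waiting queue
        while queue and len(active) < max_batch_size:
            active.append(queue.popleft())
        # one decoding step: every active request emits one token
        active = [remaining - 1 for remaining in active]
        # drop requests that just finished, freeing their slots
        active = [remaining for remaining in active if remaining > 0]
        steps += 1
    return steps


lengths = [8, 2, 2, 2, 8, 2]  # tokens to generate per request (made-up workload)
print(static_batching_steps(lengths, max_batch_size=2))      # 18
print(continuous_batching_steps(lengths, max_batch_size=2))  # 14
```

same 24 tokens, fewer decoding steps, because short requests stop blocking their slot while a long one in the same batch keeps going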