@remi_or_

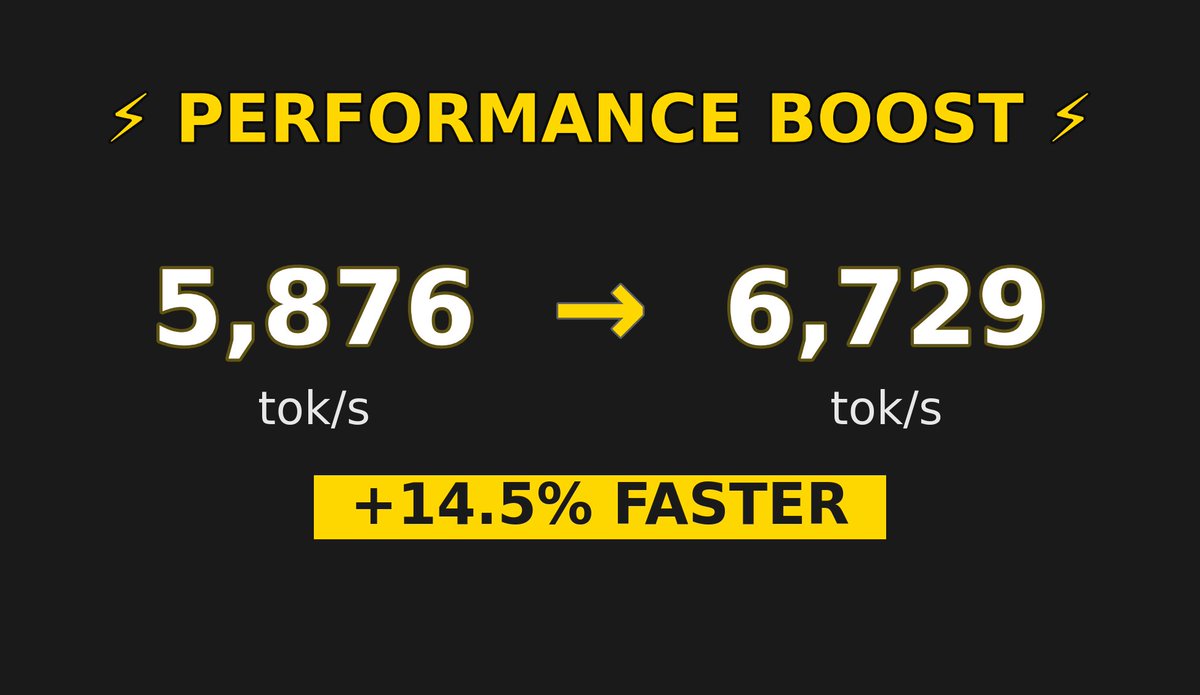

Just opened a PR to make continuous batching in transformers go EVEN faster 🚆 With simple optimizations like avoiding torch syncs and moving more operations to the GPU side, we gained 10-14.5% throughput across 500 requests 🥳 Soon, there will be native fast RL training in transformers. Keep up 😉 https://t.co/EoaEvhqS3C
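
To illustrate the kind of change meant by "no torch sync" and "more GPU-sided operations", here is a minimal sketch. It is not the code from the PR: the function names, the `EOS_ID` value, and the toy decode steps are all hypothetical. The first version reads each token back to the host with `.item()`, which on a CUDA device makes the CPU wait for the GPU on every call. The second keeps the bookkeeping in tensors on the device, so the host only needs to read the result once at the end.

```python
import torch

EOS_ID = 2  # hypothetical end-of-sequence token id, for illustration only


def lengths_with_sync(steps, eos_id=EOS_ID):
    """Track generated length per request on the host.

    `steps` is a list of 1-D tensors, one per decode step, each holding
    the next token for every request in the batch. Every `.item()` call
    copies a value to the CPU, which forces a host-device sync on CUDA.
    """
    batch = steps[0].shape[0]
    lengths = [0] * batch
    done = [False] * batch
    for tokens in steps:
        for i in range(batch):
            if not done[i]:
                lengths[i] += 1
                if tokens[i].item() == eos_id:  # sync point on CUDA
                    done[i] = True
    return lengths


def lengths_on_device(steps, eos_id=EOS_ID):
    """Same bookkeeping, kept in tensors on the device.

    No value is read back to the host inside the loop, so on CUDA the
    kernels can be queued without waiting for each step to finish.
    """
    device = steps[0].device
    batch = steps[0].shape[0]
    lengths = torch.zeros(batch, dtype=torch.long, device=device)
    done = torch.zeros(batch, dtype=torch.bool, device=device)
    for tokens in steps:
        lengths += (~done).to(torch.long)  # count this step for unfinished requests
        done |= tokens == eos_id           # mark requests that just emitted EOS
    return lengths  # the caller reads this once, e.g. with .tolist()


# Toy input: 3 requests, 3 decode steps (runs on CPU or GPU).
steps = [
    torch.tensor([5, 2, 9]),
    torch.tensor([2, 7, 9]),
    torch.tensor([1, 1, 2]),
]
print(lengths_with_sync(steps))
print(lengths_on_device(steps).tolist())
```

Both functions return the same lengths. On CPU there is no difference in synchronization behavior, so any speedup from the second form would only show up on a GPU, and how large it is depends on the workload.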