@_akhaliq

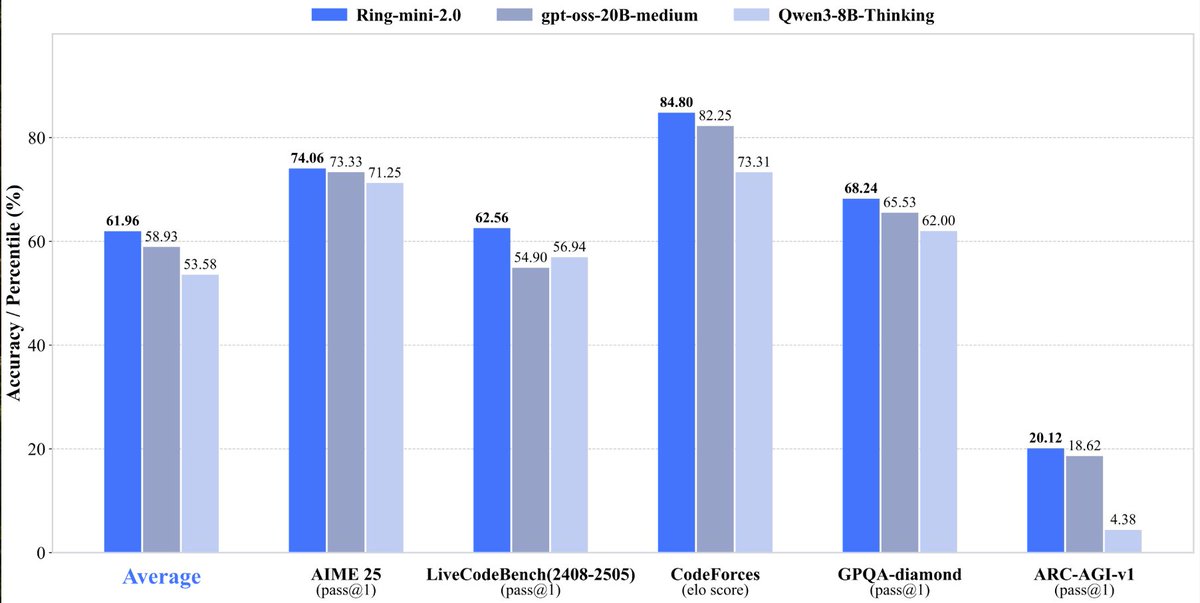

Ring-mini-2.0

With only 16B total parameters and 1.4B activated parameters, it achieves comprehensive reasoning capabilities comparable to dense models below the 10B scale.

On multiple benchmarks (LiveCodeBench, AIME 2025, GPQA, ARC-AGI-v1, etc.), it outperforms dense models below 10B and even rivals larger MoE models (e.g., gpt-oss-20B-medium) with comparable output lengths, particularly excelling in logical reasoning.

vibe coded a quick chat app in anycoder