@arankomatsuzaki

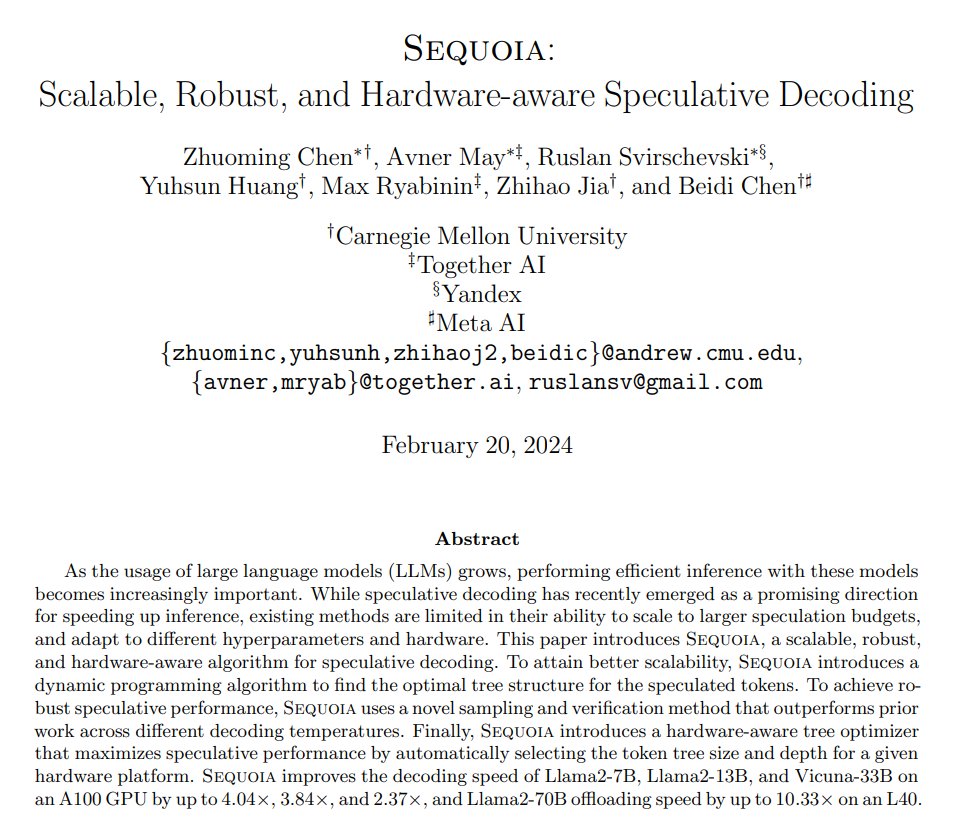

Sequoia: Scalable, Robust, and Hardware-aware Speculative Decoding

Improves the decoding speed of Vicuna-33B by up to 2.37x and Llama2-70B offloading speed by up to 10.33x

https://t.co/4Tws6hP3E2 https://t.co/W2HwiIsX8e

{

"data": [

{

"id": "",

"type": "photo",

"url": null,

"media_url": "https://pbs.twimg.com/media/GGv80D2XYAAaJvI.jpg",

"media_url_https": null,

"display_url": null,

"expanded_url": null

}

],

"score": 0.934,

"scored_at": "2025-08-09T13:46:07.552137",

"import_source": "manual_curation_2024",

"links_checked": true,

"checked_at": "2025-08-10T10:31:58.771663",

"media": [

{

"type": "photo",

"url": "https://crmoxkoizveukayfjuyo.supabase.co/storage/v1/object/public/media/posts/1759778428801187871/media_0.jpg?",

"filename": "media_0.jpg",

"original_url": "https://pbs.twimg.com/media/GGv80D2XYAAaJvI.jpg"

}

],

"storage_migrated": true

}

{

"user": {

"created_at": "2016-11-04T06:57:37.000Z",

"default_profile_image": false,

"description": "ML research & startup with @EnricoShippole",

"fast_followers_count": 0,

"favourites_count": 9848,

"followers_count": 89404,

"friends_count": 78,

"has_custom_timelines": true,

"is_translator": false,

"listed_count": 1193,

"location": "",

"media_count": 1831,

"name": "Aran Komatsuzaki",

"normal_followers_count": 89404,

"possibly_sensitive": false,

"profile_image_url_https": "https://pbs.twimg.com/profile_images/1561220982328754176/JOYS5kab_normal.jpg",

"screen_name": "arankomatsuzaki",

"statuses_count": 4455,

"translator_type": "none",

"url": "https://t.co/aZGCShnLYq",

"verified": true,

"withheld_in_countries": [],

"id_str": "794433401591693312"

},

"id": "1759778428801187871",

"conversation_id": "1759778428801187871",

"full_text": "Sequoia: Scalable, Robust, and Hardware-aware Speculative Decoding\n\nImproves the decoding speed of Vicuna-33B by up to 2.37x and Llama2-70B offloading speed by up to 10.33x\n\nhttps://t.co/4Tws6hP3E2 https://t.co/W2HwiIsX8e",

"reply_count": 3,

"retweet_count": 21,

"favorite_count": 86,

"hashtags": [],

"symbols": [],

"user_mentions": [],

"urls": [

{

"url": "https://t.co/4Tws6hP3E2",

"expanded_url": "https://arxiv.org/abs/2402.12374",

"display_url": "arxiv.org/abs/2402.12374"

}

],

"media": [

{

"media_url": "https://pbs.twimg.com/media/GGv80D2XYAAaJvI.jpg",

"type": "photo"

}

],

"url": "https://twitter.com/arankomatsuzaki/status/1759778428801187871",

"created_at": "2024-02-20T03:14:07.000Z",

"#sort_index": "1759778428801187871",

"view_count": 10309,

"quote_count": 3,

"is_quote_tweet": false,

"is_retweet": false,

"is_pinned": false,

"is_truncated": false,

"startUrl": "https://twitter.com/arankomatsuzaki/status/1759778428801187871"

}