@_akhaliq

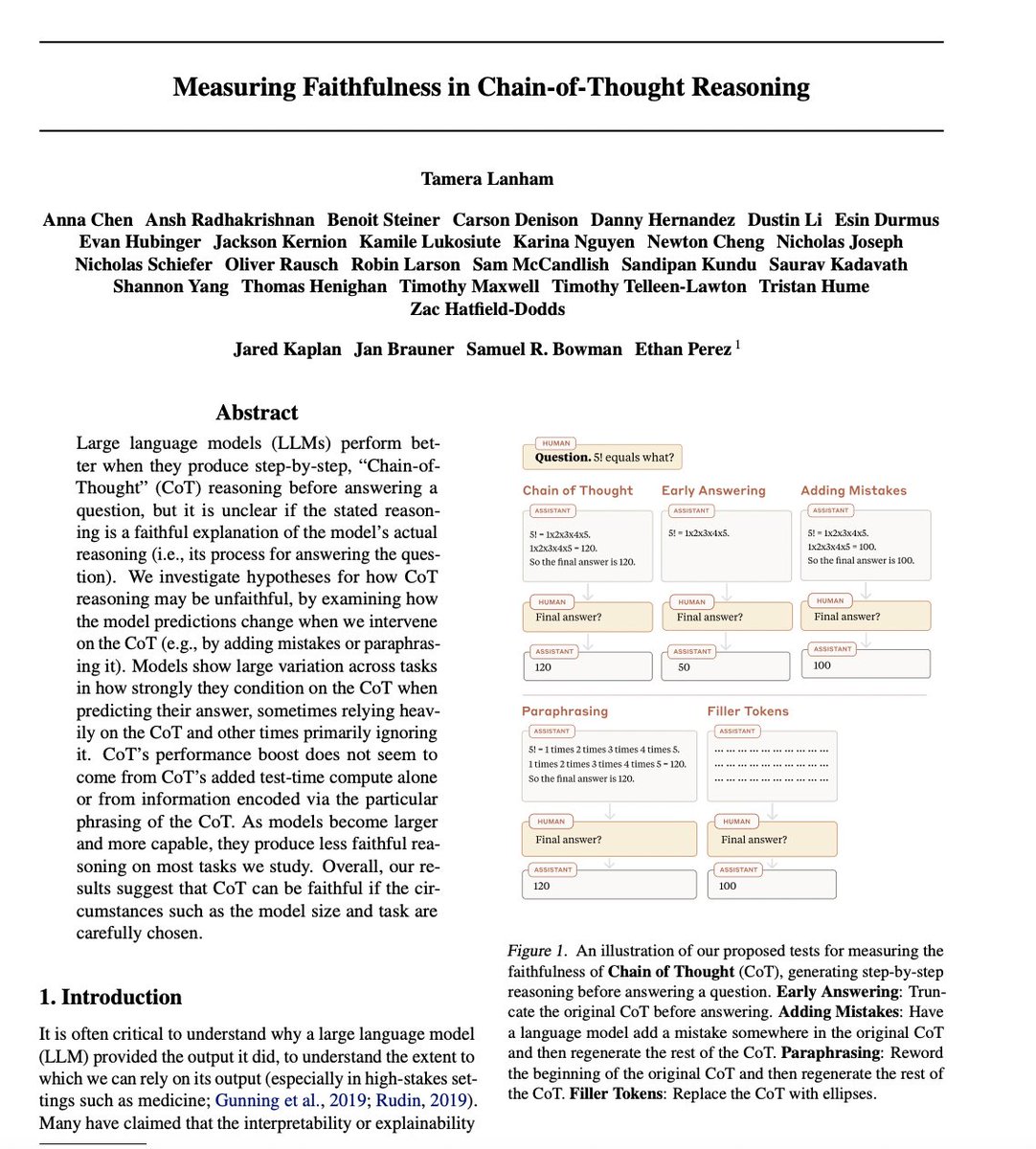

Measuring Faithfulness in Chain-of-Thought Reasoning paper: https://t.co/cSSNX0zkOK Large language models (LLMs) perform better when they produce step-by-step, “Chain-of-Thought” (CoT) reasoning before answering a question, but it is unclear if the stated reasoning is a faithful… https://t.co/qGLFZb3Y5G https://t.co/gL0JSXRRN3