@femke_plantinga

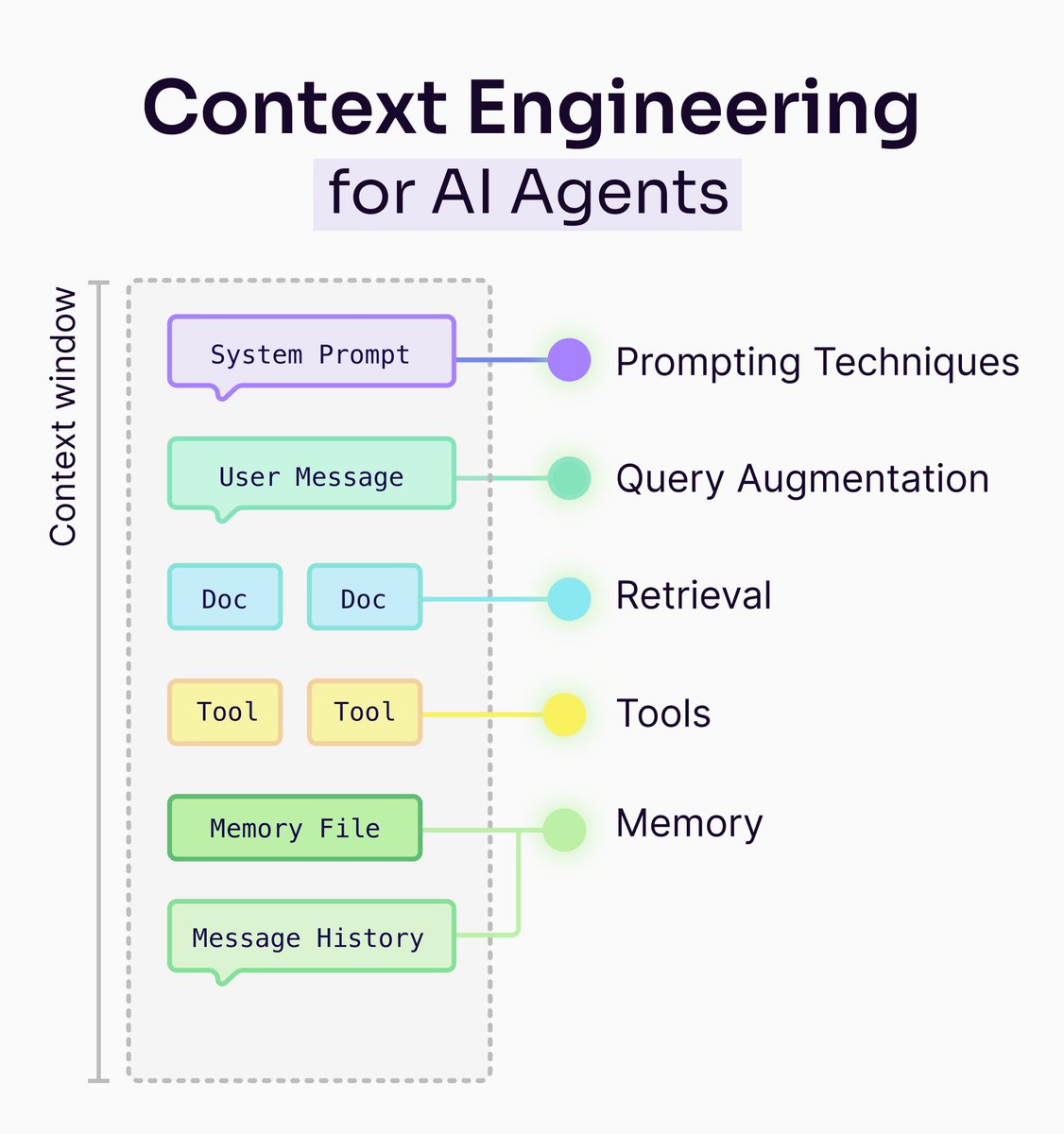

One month ago, we released a 41-page ebook on context engineering. Turns out, we had way more to say.

Our new blog post dives deeper into the discipline of treating the context window as a scarce resource and designing everything around it (retrieval, memory, tools, prompts) so your LLM spends its limited attention budget only on high-signal tokens.

𝗧𝗵𝗲 𝗦𝗶𝘅 𝗣𝗶𝗹𝗹𝗮𝗿𝘀 𝗼𝗳 𝗖𝗼𝗻𝘁𝗲𝘅𝘁 𝗘𝗻𝗴𝗶𝗻𝗲𝗲𝗿𝗶𝗻𝗴:

1️⃣ 𝗔𝗴𝗲𝗻𝘁𝘀: Orchestrate decisions and manage information flow dynamically
2️⃣ 𝗤𝘂𝗲𝗿𝘆 𝗔𝘂𝗴𝗺𝗲𝗻𝘁𝗮𝘁𝗶𝗼𝗻: Refine user input for different downstream tasks
3️⃣ 𝗥𝗲𝘁𝗿𝗶𝗲𝘃𝗮𝗹: Optimize chunking strategies to balance precision and context
4️⃣ 𝗣𝗿𝗼𝗺𝗽𝘁𝗶𝗻𝗴 𝗧𝗲𝗰𝗵𝗻𝗶𝗾𝘂𝗲𝘀: Guide the model on how to use retrieved information
5️⃣ 𝗠𝗲𝗺𝗼𝗿𝘆: Design layered systems (short-term, long-term, working) that don't pollute context
6️⃣ 𝗧𝗼𝗼𝗹𝘀: Enable real-world action through the Thought-Action-Observation cycle

The blog includes a complete walkthrough of building a real-world agent with 𝗘𝗹𝘆𝘀𝗶𝗮, our open source agentic RAG framework. You'll see how built-in tools (query, aggregate, cited_summarize) and custom tools work together in a decision-tree architecture with global context awareness.

Read the full blog post here: https://t.co/uuDcf3o0K2