@JohnNosta

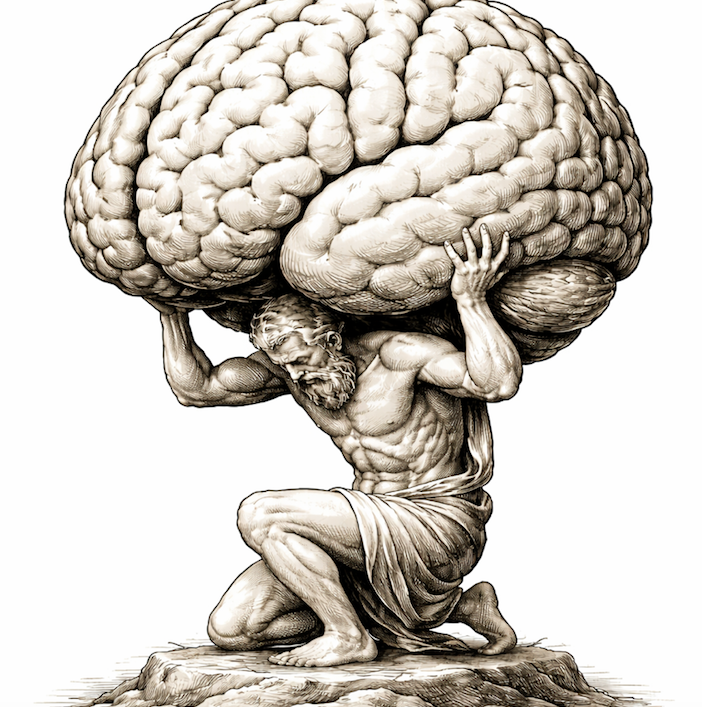

🧠 The Burden of Intelligence

👉 Why human judgment still matters, even when AI thinks better than we do.

For most of human history, intelligence was scarce. Thinking took time, and insight arrived slowly, shaped by lived experience. Cognition had friction, and this friction gave it substance and weight.

Today, that assumption is collapsing. Artificial intelligence has, to use another ubiquitous word, made cognition precariously abundant. Answers arrive instantly and patterns surface with little to no effort. Judgment is technologically packaged and delivered with a confidence that increasingly rivals, if not exceeds, our own.

My central point here is that this isn't simply another technological advance; it marks the first time human cognition itself appears to be on the obsolescence curve. We have replaced tools before, but we have never replaced thinking.

That's why this moment feels different to me. AI isn't extending human effort in the way machines once extended muscle or speed. It's occupying territory we once assumed was uniquely human: reasoning, synthesis, interpretation. In many domains, AI performs these functions better than we do. This is a claim many resist, because it contradicts how we have always understood progress: generally slow, incremental, and occasionally punctuated by sudden change.

Blink, it's different now. AI is already outperforming humans at just about everything from diagnosis to creativity. These are no longer edge cases or lab demonstrations but operational realities embedded in our lives.

Even ethics, long treated as safely human, has proven more codifiable than we expected. Moral constraints can be written down, trade-offs can be formalized, and even prohibitions can be managed at scale. What once felt irreducibly human increasingly fits inside AI systems.
Maybe the curious discovery here isn't that machines lack morality, but that much of what we called moral reasoning was more procedural than we care to admit.

So the question is no longer whether AI will become cognitively superior. In many ways, it already is. The deeper question is what happens when intelligence itself changes categories.

When intelligence becomes abundant, its value shifts. What becomes scarce isn't cognition, but ownership. The fundamental shift here is from insight to accountability. Simply put, what matters is not the answers, but responsibility for what those answers set in motion. This is the burden of intelligence.

As AI grows more capable, my sense is that the cost of disengagement rises. A flawed recommendation from a powerful system carries far more consequence than one from a limited or optional tool. In this context, delegation becomes most tempting precisely where it is most dangerous. The smarter the AI, the easier it is to step back, and the higher the price of doing so.

Here's the key fact: human intelligence is no longer defined by producing better answers. It's defined by bearing the consequences of answers we did not fully generate and, dare I say, often do not fully understand.

Many of us already feel this shift, even if we do not name it. The quiet discomfort of endorsing a recommendation we did not reason through. The unease of defending a conclusion that feels right but arrived from somewhere else. This is the moment when AI's output is both smart and difficult to challenge, and it is also the moment when responsibility lands squarely on us. That discomfort is not failure. It is the sensation of intelligence without authorship.

This burden isn't some sort of techno-consolation prize. It's much more, and perhaps more challenging. It demands "cognitive presence" in systems designed to make human presence feel unnecessary.
It asks us to remain accountable at the exact moment automation invites surrender. This may push us toward the margins of decision-making, and those margins are not trivial. They are where values collide and harm accumulates, the very fringes where human engagement becomes less an option and more an imperative.

The real risk of AI is not that machines will think better than us. It's that thinking will begin to feel complete without us.

If human cognition is being diminished, the response cannot be an afterthought or even nostalgia. It must be vigilance, realized as the sustained, effortful refusal to disengage simply because intelligence no longer requires our participation. Vigilance isn't watching from the sidelines but remaining cognitively present inside technologies that function perfectly well without us.

The future of intelligence is not a contest between minds. It is a test of whether humans are willing to remain answerable in a world where intelligence no longer needs them to function. The burden of intelligence isn't simply about preserving human primacy but about refusing to abandon responsibility just because thinking has become easy. And that burden will not disappear, no matter how intelligent our machines become.

https://t.co/qXFgDxPE0O #AI #intelligence #cognition