@mervenoyann

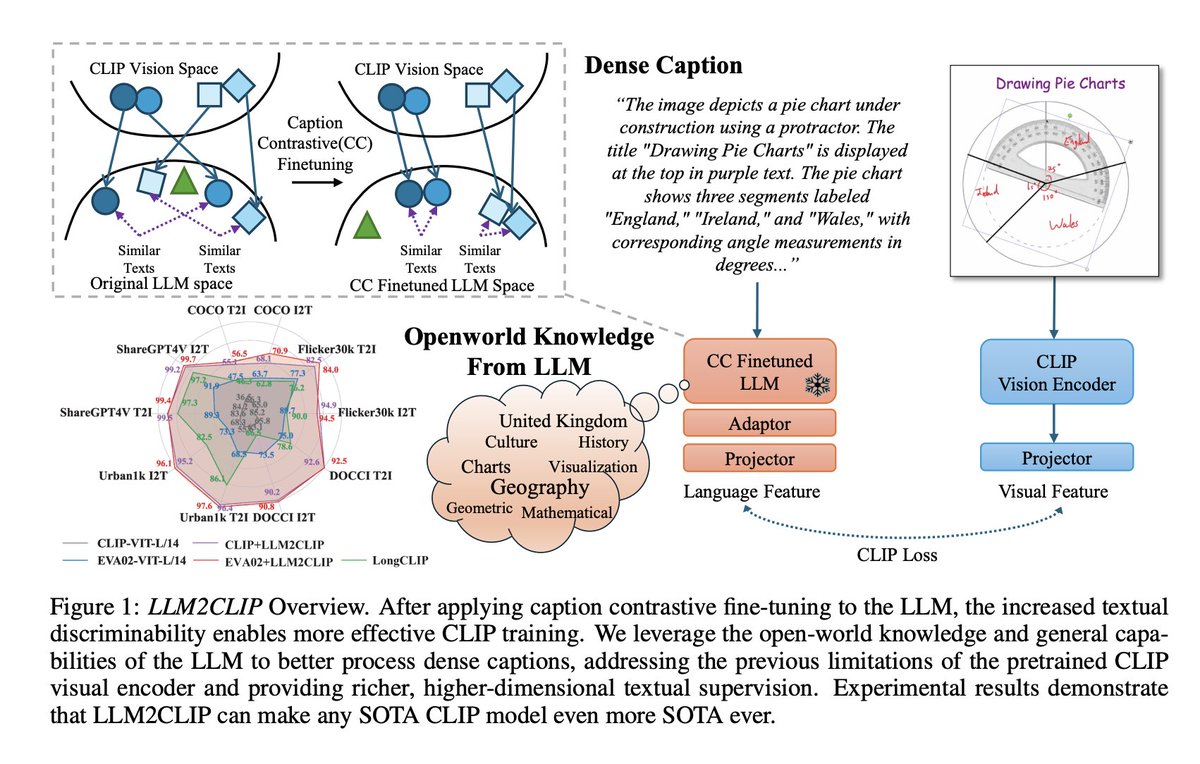

Microsoft released LLM2CLIP: a CLIP model with a longer context window for complex text inputs 🤯 TL;DR: they replaced CLIP's text encoder with various LLMs fine-tuned on captioning and report better top-k accuracy on retrieval 🔥 All models are Apache 2.0 licensed on @huggingface 😍 https://t.co/xvwaWmZJj1
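The following is a minimal, generic sketch of "top-k accuracy on retrieval" for text-to-image search. Each text query ranks all images by cosine similarity, and it counts as a hit if its paired image (same index) lands in the top k. The 2-D vectors are made-up stand-ins for real encoder outputs, and `top_k_accuracy` is a hypothetical helper. This is not LLM2CLIP's evaluation code.

```python
import math


def cosine(a, b):
    # Cosine similarity between two plain-Python vectors
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k_accuracy(text_embs, image_embs, k):
    """Fraction of text queries whose paired image (same index) ranks in the top k."""
    hits = 0
    for i, query in enumerate(text_embs):
        scores = [cosine(query, img) for img in image_embs]
        # Image indices sorted from most to least similar to this query
        ranked = sorted(range(len(image_embs)), key=lambda j: scores[j], reverse=True)
        if i in ranked[:k]:
            hits += 1
    return hits / len(text_embs)


# Toy embeddings (hypothetical): text i is paired with image i
text_embs = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.2]]
image_embs = [[1.0, 0.1], [0.1, 1.0], [0.6, 0.8]]

print(top_k_accuracy(text_embs, image_embs, 1))  # 0.6666666666666666
print(top_k_accuracy(text_embs, image_embs, 2))  # 1.0
```

Here the third query ranks its paired image second, so it misses at k=1 but hits at k=2. A text encoder that separates captions better would push more paired images to rank 1.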