@mathuvu_

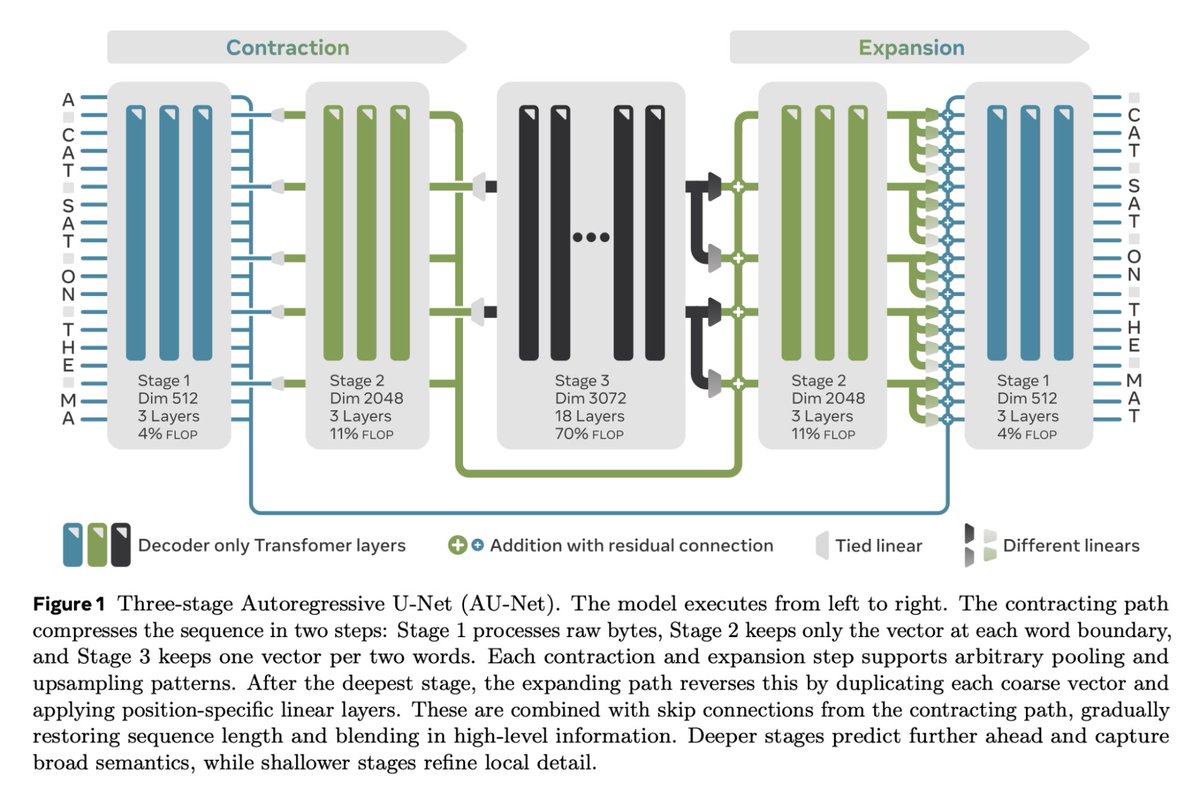

We present an Autoregressive U-Net (AU-Net) that incorporates tokenization inside the model, pooling raw bytes into words, then into word-groups. AU-Net focuses most of its compute on building latent vectors that correspond to larger units of meaning. Joint work with @byoubii 1/8 https://t.co/QqQ9qb4Xfv
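
To make the bytes → words → word-groups idea concrete, here is a toy sketch of hierarchical pooling. It is not the AU-Net implementation: the space-based word boundary, the mean-pooling operator, the one-dimensional "embedding", and the names `word_spans`, `mean_pool`, `pool_hierarchy`, and `group_size` are all assumptions made for illustration.

```python
from typing import List, Tuple

Vector = List[float]


def mean_pool(vectors: List[Vector]) -> Vector:
    """Average a non-empty list of equal-length vectors (assumed pooling operator)."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def word_spans(data: bytes) -> List[range]:
    """Split byte positions into word spans, assuming a space (0x20) closes a word."""
    spans = []
    start = 0
    for i, b in enumerate(data):
        if b == 0x20:
            spans.append(range(start, i + 1))  # the space stays with its word
            start = i + 1
    if start < len(data):
        spans.append(range(start, len(data)))  # trailing word without a space
    return spans


def pool_hierarchy(
    text: str, group_size: int = 2
) -> Tuple[List[Vector], List[Vector], List[Vector]]:
    """Return (byte-level, word-level, word-group-level) vectors for `text`."""
    data = text.encode("utf-8")
    # Toy 1-d "embedding": the byte value itself (a real model learns these).
    byte_vecs = [[float(b)] for b in data]
    # Stage 1: pool the bytes of each word into one vector.
    word_vecs = [mean_pool([byte_vecs[i] for i in span]) for span in word_spans(data)]
    # Stage 2: pool every `group_size` consecutive words into one vector.
    group_vecs = [
        mean_pool(word_vecs[i:i + group_size])
        for i in range(0, len(word_vecs), group_size)
    ]
    return byte_vecs, word_vecs, group_vecs


if __name__ == "__main__":
    byte_vecs, word_vecs, group_vecs = pool_hierarchy("a bc def")
    print(len(byte_vecs), len(word_vecs), len(group_vecs))
```

Each stage shortens the sequence, so any layers operating on the word and word-group vectors would see far fewer positions than the byte-level ones.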