@DrTechlash

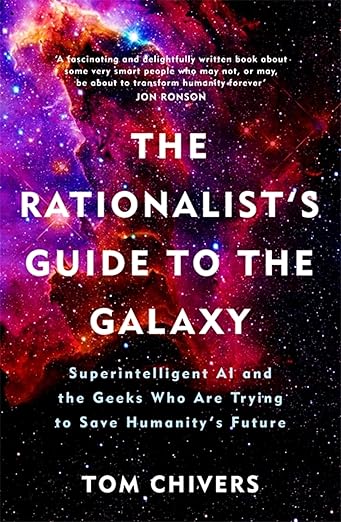

The Rationalist's Guide to the Galaxy: Superintelligent AI and the Geeks Who Are Trying to Save Humanity's Future, by Tom Chivers.

The book opens with a meeting Chivers had in Berkeley with Paul Crowley, who told him, "I don't expect your children to die of old age." Chivers came from the UK to Berkeley because "over the years, I became more involved with the Rationalists. I started reading their websites; I learned the jargon, all these terms like 'paperclip maximizer' and 'Pascal’s mugging.'" "The key text – the holy book, according to those who think the whole thing is a quasi-religion – is a huge series of blog posts" by Eliezer Yudkowsky, "which came to be known as the Sequences." Having read them, Chivers offers many explainers of the rationalists' thought experiments.

The story unfolds through the Extropians mailing list, Yudkowsky's SL4 (Shock Level 4) mailing list, the launch of LessWrong in 2009 and Slate Star Codex in 2013, and Nick Bostrom's 2014 book "Superintelligence," which served as a turning point. The book then covers the idea of FOOM; the Roko's Basilisk hysteria; the habit of putting numbers on everything (even when they are only estimates) in order to think probabilistically and become better Bayesians (rational Bayesian optimizers); utilitarianism ("shut up and multiply") and its implications for AI safety (if you believe in AI existential risk); how the movement attracts men on the autistic spectrum; and the community's polyamorous arrangements and group homes.

Chivers briefly discusses the "dark sides": "They do share a lot of the surface features of a cult: a charismatic figurehead and other high-status inner-circle members; a key text that in-group members are supposed to have read, and which encodes the central tenets of their 'belief'; unorthodox sexual practices; a message of impending apocalypse, and a promise of eternal life; and a way to donate money to avoid that apocalypse and achieve paradise."

He ties it to the Effective Altruism movement by quoting David Gerard: "Clearly, the most cost-effective initiative possible for all of humanity is donating to fight the prospect of unfriendly artificial intelligence, and oh look, there just happens to be a charity for that precise purpose right here! WHAT ARE THE ODDS." Shortly thereafter, however, Chivers defends the movement, describing its members as just "nonconformists." For further criticism of the movement, he points to a subreddit called "/r/sneerclub." He does not recommend reading it. I do.

Throughout the chapters, Chivers gradually embraces the notion that AI will wipe out humanity. To resolve the dissonance and stress this caused, he met with Anna Salamon, president and co-founder of CFAR (the Center for Applied Rationality). The purpose of their meeting? An "Internal Double Crux" (debugging) session with her. As he finished the inner debate, he broke down in tears, realizing that, indeed, he might not see his children "die of old age." Totally sold on Yudkowsky's claim that "AI will kill EVERYONE," Chivers became an even more ardent supporter of the movement. So he celebrates its achievements: "What they have achieved in terms of the AI debate is, I think, remarkable. They've taken the niche, practically dystopian-science-fiction idea of AI risk and made people take it seriously." It's no longer in the realm of "fringe nerds on an email list."

He ends the book with this sentence: "There is a small but non-negligible probability that, when we look back on this era in the future, we'll think that Eliezer Yudkowsky and Nick Bostrom – and the SL4 email list, and LessWrong – have saved the world."

I enjoyed reading this book while writing my own upcoming book on this topic. Just, please, tell me again: how is this NOT a doomsday cult?