@omarsar0

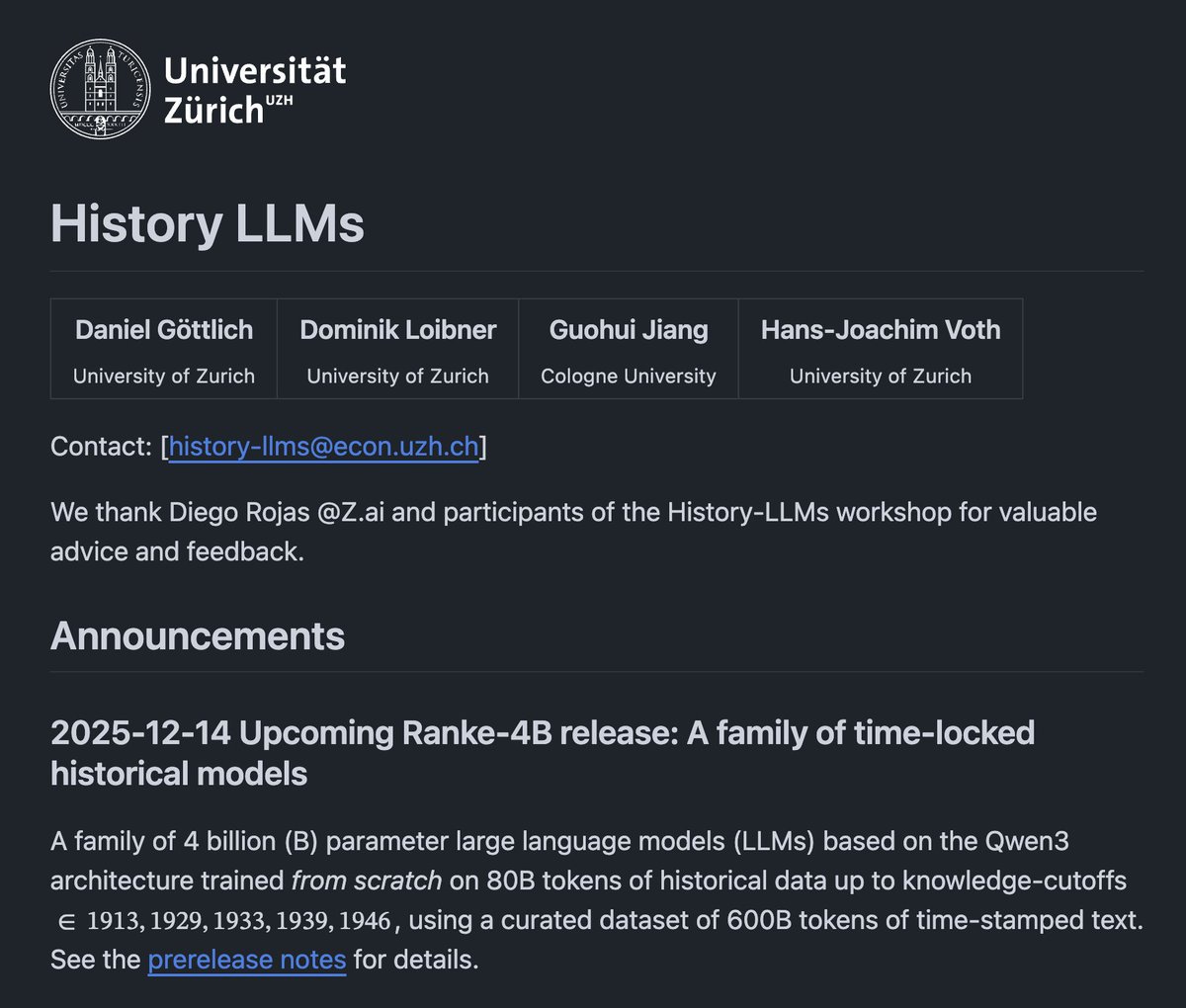

History LLMs are models trained exclusively on pre-1913 texts.

Beyond the research applications, here is why this is an exciting effort for me:

1. Studying historical discourse without modern bias. These models capture what was "thinkable, predictable, or sayable" at specific moments in history. Unlike prompting modern LLMs to roleplay, these models genuinely don't know about future events because that information literally isn't in their training data. Lots of applications there.

2. Understanding historical predictions and assumptions. Researchers can explore what contemporaries expected would happen versus what actually occurred. This is useful for studying economic forecasts, political analysis, and social expectations from past eras.

3. Analyzing language and concept evolution. Track how terminology, ideas, and discourse patterns changed over time. The specific cutoff dates (1913, 1929, 1933, 1939, 1946) align with major historical inflection points (pre-WWI, Great Depression, WWII start, post-war). Heck, this might even be useful where you are using sub-agents for history-related tasks or expertise.

4. Detecting anachronisms and large-scale textual analysis. Useful for historians, writers, and filmmakers to verify period-accurate language and concepts. These models can flag modern assumptions that wouldn't exist in historical contexts. This also enables exploration of massive historical corpora in ways traditional archival research cannot. It acts as a "compressed representation" of the discourse from each era.

Thoughts?