@ArtificialAnlys

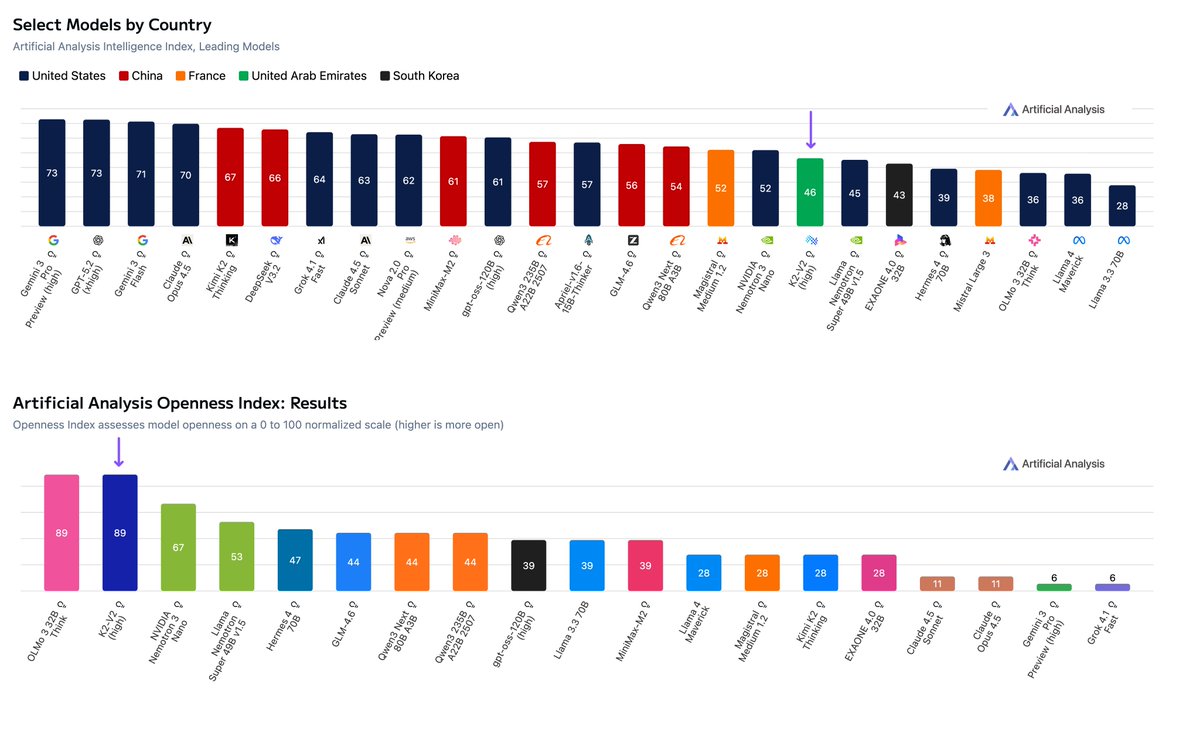

MBZUAI’s Institute of Foundation Models has released K2-V2, a 70B reasoning model that is tied for #1 in our Openness Index, and is the first model on our leaderboards from the UAE.

📖 Tied leader in Openness: K2-V2 joins OLMo 3 32B Think at the top of the Artificial Analysis Openness Index - our newly released, standardized, independently assessed measure of AI model openness across availability and transparency. MBZUAI went beyond open access and licensing of the model weights - they provide full access to pre- and post-training data. They also publish training methodology and code with a permissive Apache license allowing free use for any purpose. This makes K2-V2 a valuable contribution to the open source community and allows more effective fine-tuning. See links below!

🧠 Strong medium-sized (40-150B) open weights model: At 70B, K2-V2 scores 46 on our Intelligence Index with its High reasoning mode. This puts it above Llama Nemotron Super 49B v1.5 but below Qwen3 Next 80B A3B. The model has a relative strength in instruction following, with a score of 60% in IFBench.

🇦🇪 First UAE entrant on our leaderboards: In a sea of largely US and Chinese models, K2-V2 stands out as the first representation of the UAE in our leaderboards, and the second entrant from the Middle East after Israel’s AI21 Labs. K2-V2 is the first MBZUAI model we have benchmarked, but the lab has previously released models with a particular focus on language representation, including Egyptian Arabic and Hindi.

📊 Lower reasoning modes reduce token use & hallucination: K2-V2 has 3 reasoning modes, with the High reasoning mode using a substantial ~130M tokens to complete our Intelligence Index. However, the Medium mode reduces token usage by ~6x with only a 6pt drop in our Intelligence Index. Interestingly, lower reasoning modes score better in our knowledge and hallucination index, AA-Omniscience, due to a reduced tendency to hallucinate.