@SakanaAILabs

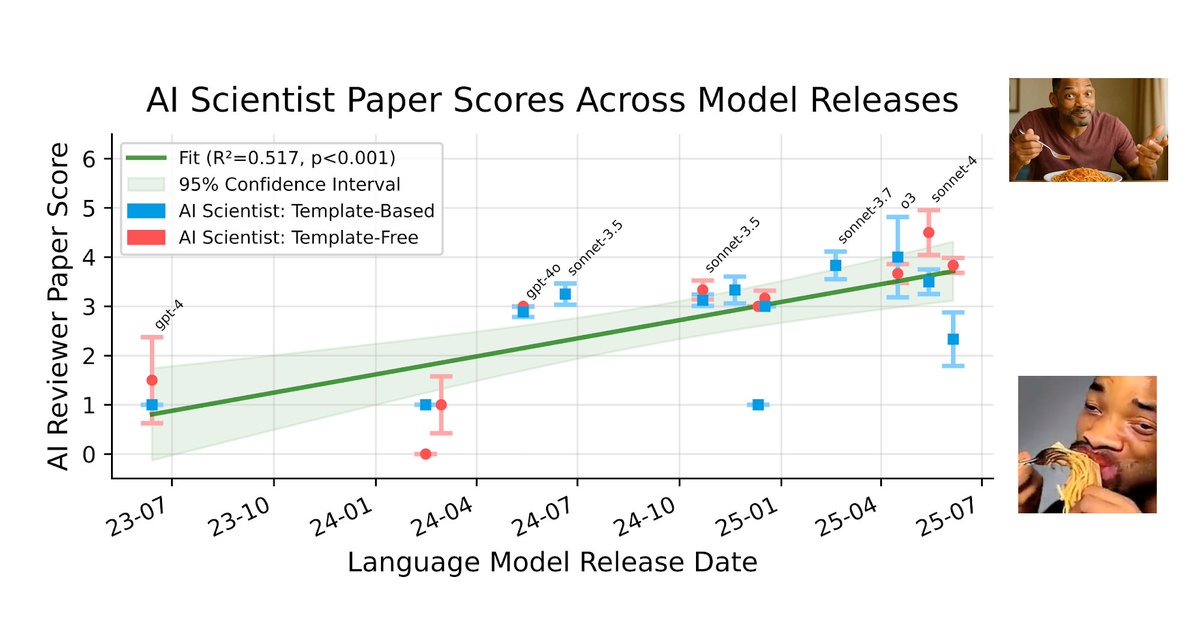

One of the most exciting findings in our @Nature paper is the discovery of a clear scaling law for AI science. Using our Automated Reviewer to grade papers generated by different foundation models, we observed that as the underlying models improve, the quality of the generated scientific papers increases correspondingly. Crucially, we expect this scaling to work along two axes: more capable foundation models, and more compute at inference time. This implies that as compute costs decrease and model capabilities continue to improve, future versions of AI Scientists will be substantially more capable. You can see this trend in the attached chart and read the full open-access paper (PDF) here: https://t.co/G8Zebzsf2O