@itzik009

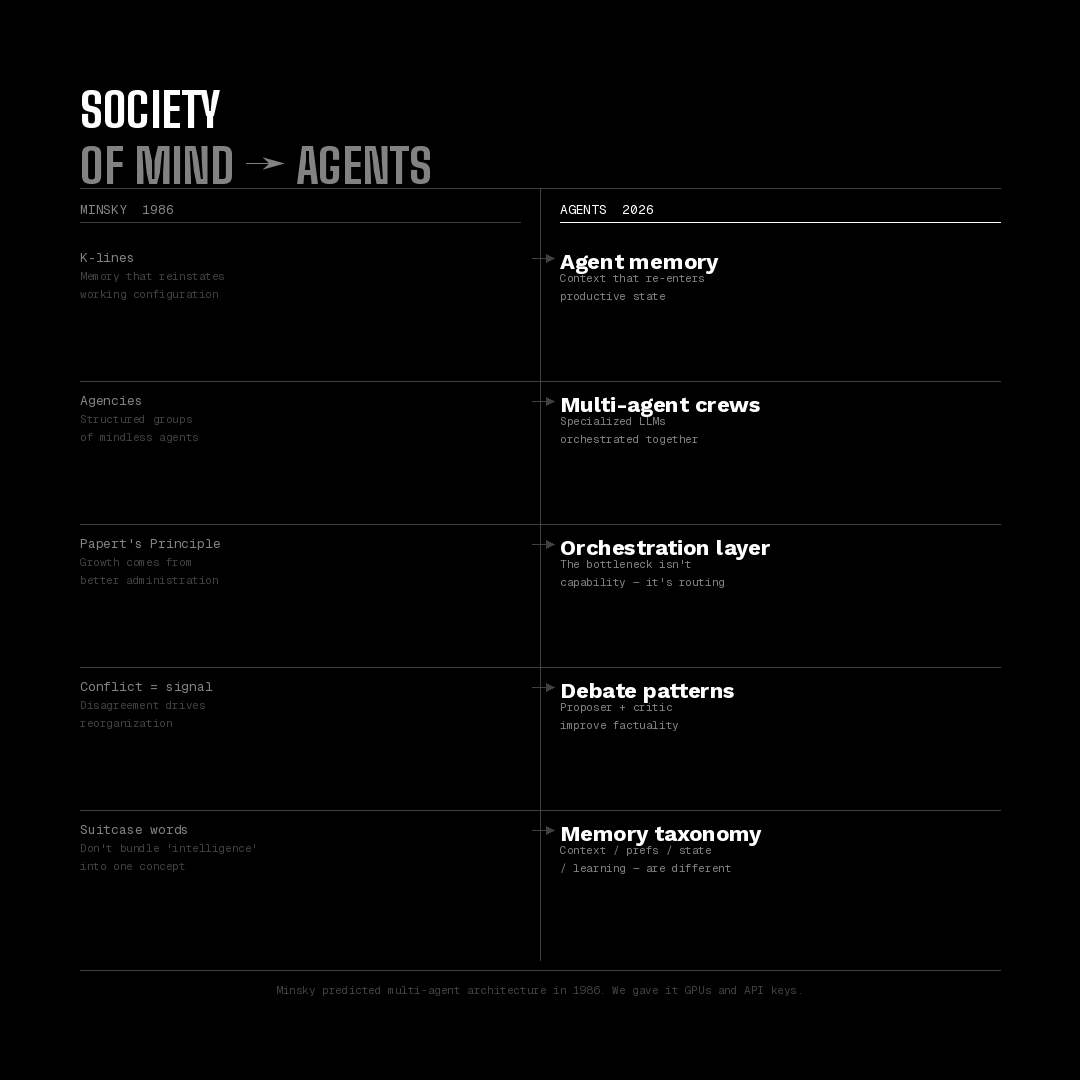

Your AI agent remembers everything. It understands nothing.

Meta paid $2 billion for an AI memory startup. Their secret weapon? A todo list written in markdown.

That sentence sounds absurd. It's directionally true. And it tells you everything about where agent memory actually is right now.

Here's the real problem. "Memory" in AI means four completely different things:

- Short-term context (what's in the window right now)
- Persistent preferences (you like Python over JavaScript)
- Session state (what the agent already tried)
- Learned knowledge (genuine understanding that updates over time)

Most products ship the first two. Some do the third a bit. Almost nobody does the fourth. But every marketing page reads like they do.

Marvin Minsky saw this coming in 1986. His Society of Mind, a decades-old blueprint we keep rediscovering, made one thing clear:

The hard part was never generating answers. It's building the administrative layer that decides which answers win.

K-lines. His proto-memory model. The idea that remembering isn't retrieval of a static record; it's reinstatement of a working configuration.

Not "what happened last time." But "how do I re-enter the productive state."

That's the difference between storage and understanding.

The midbrain solves this with emotion. Fear. Curiosity. Reward. Loss.

Emotion is the salience filter. It decides what's worth consolidating from episodic → semantic → procedural memory.

Current agents have no equivalent. Every token weighted the same. No filter. No signal. Just noise that compounds.

This is why the best agent memory systems keep landing on embarrassingly simple solutions. Markdown files. Filesystem state. Explicit plan documents re-read before every step.

This is not just because they're elegant. It's because without a consolidation layer, nothing more sophisticated actually works.

The real frontier isn't better retrieval.
It's four unsolved problems:

- Consolidation: merging experiences into generalized knowledge, not just storing every interaction as a separate record
- Contradiction handling: when new information conflicts with old, update beliefs; don't just append both versions
- Selective forgetting: an agent that stores everything drowns in its own history
- Transfer: knowledge from one context should inform behavior in another

None of these are solved. Most are barely being worked on outside academia.

Society is just memory at scale.

- Laws = procedural memory
- Culture = emotional memory
- History = episodic memory
- Science = semantic memory

When AI agents develop the ability to consolidate, not just store, they won't just be better tools. They'll be participants in collective intelligence.

Test our memory and learning system: https://t.co/nG7Nayirlx

The question isn't whether agents can remember. It's whether we build the layer that decides what's worth understanding.

From noise to signal.
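
To make two of the unsolved problems above a little more concrete, here is a toy sketch of contradiction handling (overwrite a belief instead of appending a second version) and selective forgetting (evict the least salient belief when the store is full). Everything in it is an assumption for illustration: the `Belief` and `BeliefStore` names, the hand-assigned `salience` score, and the fixed `capacity` are hypothetical and not taken from any product mentioned in this post. Real consolidation would need a learned salience signal, which is exactly the missing piece.

```python
from dataclasses import dataclass


@dataclass
class Belief:
    value: str
    salience: float  # hypothetical importance score, assigned by hand here
    updated_at: int  # step counter, not wall-clock time


class BeliefStore:
    """Toy memory that keeps one current belief per key (illustrative only)."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.beliefs: dict[str, Belief] = {}
        self.step = 0

    def observe(self, key: str, value: str, salience: float) -> None:
        self.step += 1
        old = self.beliefs.get(key)
        if old is not None:
            # Contradiction handling: the new value replaces the old one
            # rather than being appended; keep the higher salience.
            salience = max(salience, old.salience)
        self.beliefs[key] = Belief(value, salience, self.step)
        self._forget()

    def _forget(self) -> None:
        # Selective forgetting: drop the least salient (then oldest) belief
        # until the store fits its capacity.
        while len(self.beliefs) > self.capacity:
            victim = min(
                self.beliefs,
                key=lambda k: (self.beliefs[k].salience, self.beliefs[k].updated_at),
            )
            del self.beliefs[victim]


store = BeliefStore(capacity=2)
store.observe("language", "JavaScript", 0.4)
store.observe("language", "Python", 0.6)  # conflicts with the earlier belief
store.observe("editor", "vim", 0.2)
store.observe("deadline", "Friday", 0.9)  # store is over capacity after this
```

After these four observations the store holds a single `language` belief (`Python`), and the low-salience `editor` entry has been forgotten. The hard part is not this bookkeeping. It is deciding the salience numbers, which this sketch simply takes as input.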