@realJessyLin

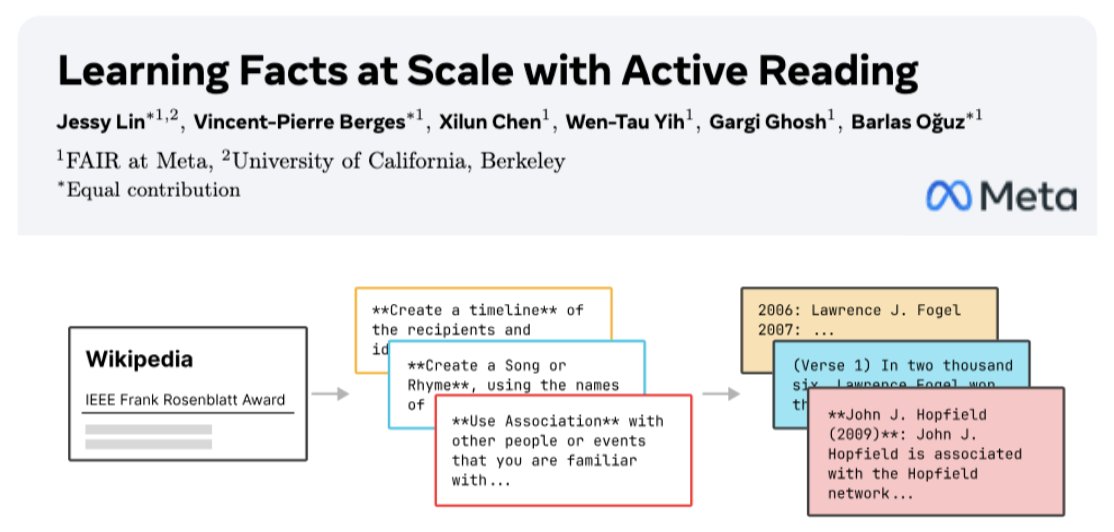

🔍 How do we teach an LLM to 𝘮𝘢𝘴𝘵𝘦𝘳 a body of knowledge? In new work with @AIatMeta, we propose Active Reading 📙: a way for models to teach themselves new things by self-studying their training data.

Results:
* 𝟔𝟔% on SimpleQA w/ an 8B model by studying the wikipedia docs (+𝟑𝟏𝟑% vs plain finetuning)
* a domain-specific expert model: 𝟏𝟔𝟎% vs FT on FinanceBench knowledge
* an 8B wikipedia expert competitive w/ 405B on factuality (💥open-sourced!)

🧵[1/n]