Your curated collection of saved posts and media

proj: https://t.co/fCl3QBpnoE abs: https://t.co/i50YCzUKax repo: https://t.co/ml7dOTqYy1

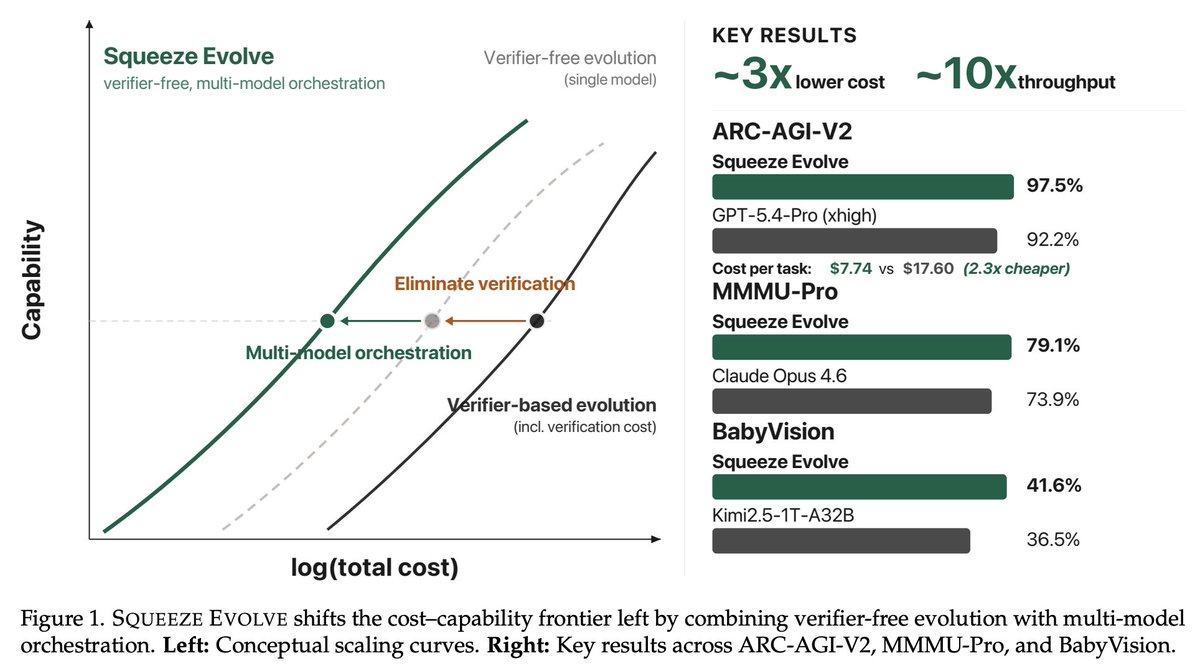

Squeeze Evolve: A Unified Framework for Verifier-Free Evolution
Across AIME 2025, GPQA-Diamond, ARC-AGI-V2, MMMU-Pro, etc.:
- Up to ~3x API cost reduction
- Up to ~10x increase in fixed-budget serving throughput

https://t.co/MoTgGNAYns

@plainionist The industry has misled the public with terms like “In-Context Learning”, “Skills” or “Reasoning”. It’s trivial to prove no real learning is happening: the model’s weights are never changed. Remove the context, and the alleged “learning” disappears instantly. Puff.

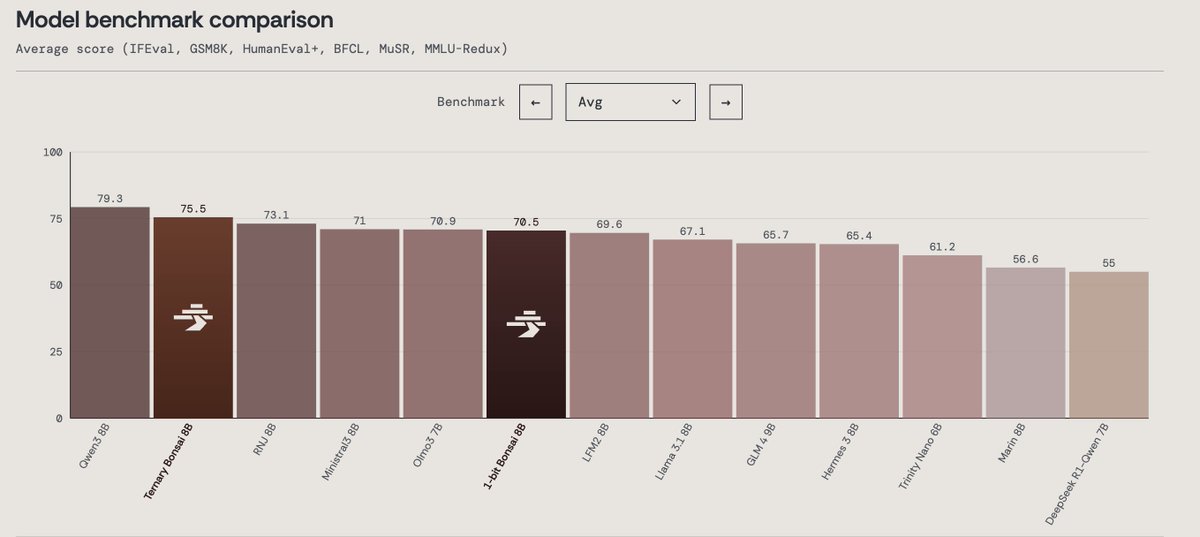

Today we’re announcing Ternary Bonsai: Top intelligence at 1.58 bits. Using ternary weights {-1, 0, +1}, we built a family of models that are 9x smaller than their 16-bit counterparts while outperforming most models in their respective parameter classes on standard benchmarks. We’re open-sourcing the models under the Apache 2.0 license in three sizes: 8B (1.75 GB), 4B (0.86 GB), and 1.7B (0.37 GB).
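The "1.58 bits" and "9x smaller" figures can be sanity-checked with a little arithmetic. The sketch below assumes that 1.58 bits refers to log2(3), the information content of one three-valued weight, and that "GB" means 10^9 bytes; neither assumption is stated in the announcement. The `ideal_size_gb` helper is a name made up for this illustration.

```python
import math

# Information content of one ternary weight {-1, 0, +1}: log2(3) bits.
# Assumption: this is what "1.58 bits" in the announcement refers to.
BITS_PER_TERNARY_WEIGHT = math.log2(3)


def ideal_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Size in GB (assumed 10^9 bytes) if every parameter used exactly bits_per_weight bits."""
    return n_params * bits_per_weight / 8 / 1e9


# Parameter counts and file sizes as quoted in the announcement.
reported = {"8B": (8e9, 1.75), "4B": (4e9, 0.86), "1.7B": (1.7e9, 0.37)}

for name, (n_params, reported_gb) in reported.items():
    fp16_gb = ideal_size_gb(n_params, 16)
    ternary_gb = ideal_size_gb(n_params, BITS_PER_TERNARY_WEIGHT)
    print(
        f"{name}: 16-bit {fp16_gb:.2f} GB, ideal ternary {ternary_gb:.2f} GB, "
        f"reported {reported_gb} GB, 16-bit/reported {fp16_gb / reported_gb:.1f}x"
    )
```

Under these assumptions the 16-bit to reported-size ratio comes out a little above 9x for all three models, which matches the "9x smaller" claim. The reported files are somewhat larger than the ideal ternary size; the announcement does not say why.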

Qwen3.6-35B-A3B just dropped. Red Hat AI has an NVFP4 quantized checkpoint ready. 35B params, 3B active, quantized with LLM Compressor. Preliminary GSM8K Platinum: 100.69% recovery (slightly above baseline). Early release. Let us know what you think! https://t.co/i5Fc4P7NVN
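For readers unfamiliar with the "recovery" metric, here is a minimal sketch. It assumes recovery is defined as the quantized model's score divided by the unquantized baseline's score, expressed as a percentage; the post does not spell out the definition. The two scores below are hypothetical placeholders, not the actual GSM8K Platinum results.

```python
def recovery_percent(quantized_score: float, baseline_score: float) -> float:
    """Quantized score as a percentage of the baseline score (assumed definition)."""
    if baseline_score <= 0:
        raise ValueError("baseline_score must be positive")
    return 100.0 * quantized_score / baseline_score


# Hypothetical accuracies chosen only to illustrate a recovery above 100%.
baseline = 0.9000
quantized = 0.9062
print(f"recovery: {recovery_percent(quantized, baseline):.2f}%")
```

A value above 100% means the quantized checkpoint scored marginally higher than the baseline on that benchmark, which on a single preliminary run is usually within evaluation noise rather than evidence of a real improvement.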

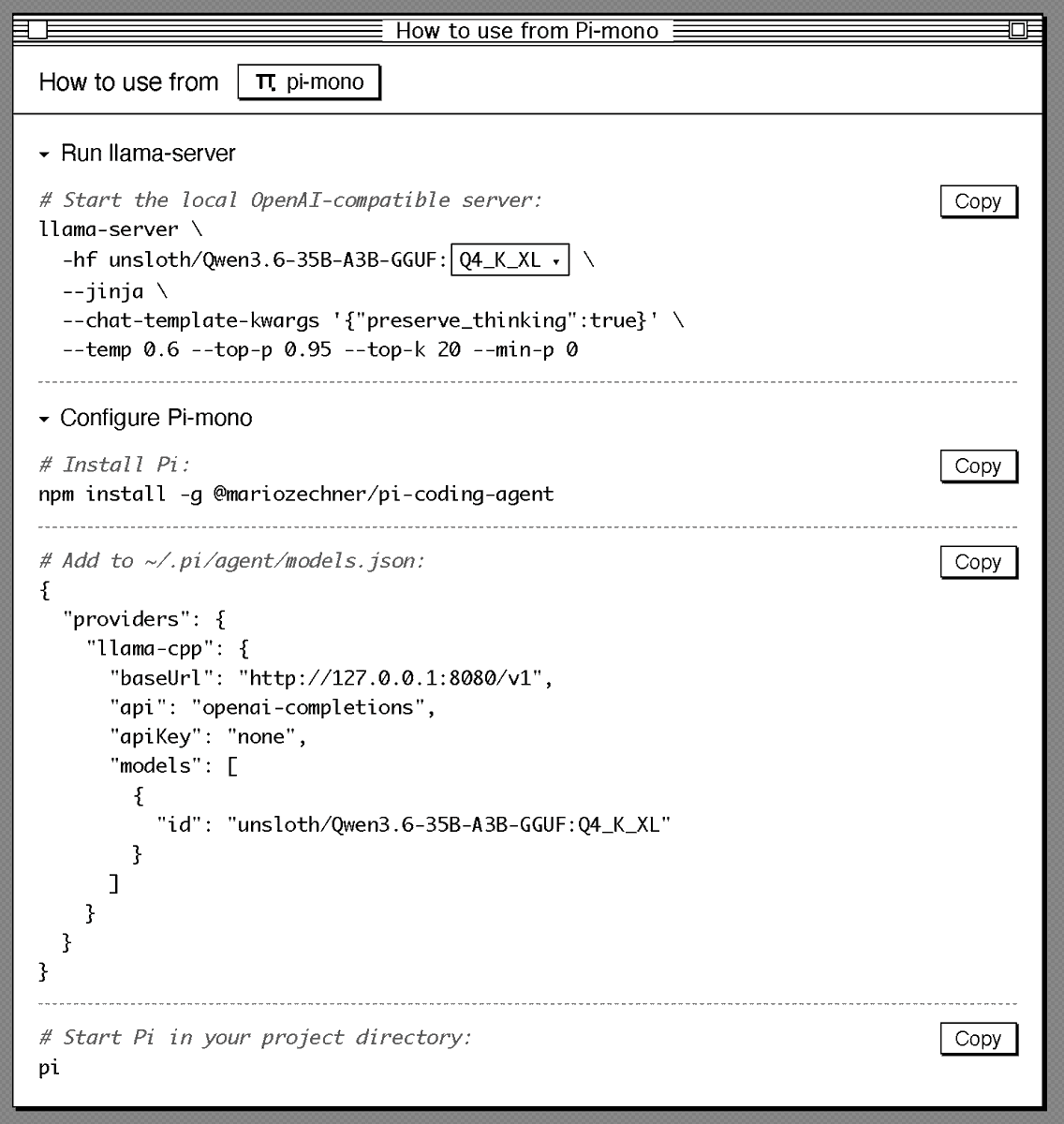

Sharing my current setup to run Qwen3.6 locally in a good agentic setup (Pi + llama.cpp). Should give you a good overview of how good local agents are today:

    # Start llama.cpp server:
    llama-server \
      -hf unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL \
      --jinja \
      --chat-template-kwargs '{"preserve_thinking":true}' \
      --temp 0.6 --top-p 0.95 --top-k 20 --min-p 0

    # Configure Pi:
    {
      "providers": {
        "llama-cpp": {
          "baseUrl": "http://127.0.0.1:8080/v1",
          "api": "openai-completions",
          "apiKey": "none",
          "models": [
            { "id": "unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL" }
          ]
        }
      }
    }
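To check that the server is reachable before pointing an agent at it, a small standard-library client can be enough. The sketch below assumes the server exposes an OpenAI-style `/chat/completions` route under the `baseUrl` from the config and returns the usual `choices[0].message.content` shape. The `build_chat_request` and `ask` helpers are names invented for this example and are not part of Pi or llama.cpp.

```python
import json
import urllib.request

# Values copied from the setup in the post.
BASE_URL = "http://127.0.0.1:8080/v1"
MODEL_ID = "unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions POST request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        # Mirrors two of the sampling flags passed to the server in the post.
        "temperature": 0.6,
        "top_p": 0.95,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # The config uses "none" as a placeholder API key.
            "Authorization": "Bearer none",
        },
        method="POST",
    )


def ask(prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the assistant text (assumed response shape)."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running, `print(ask("Reply with the single word: ready"))` should print a short reply. Splitting request construction from sending makes the payload easy to inspect without a live server.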

⚡ Meet Qwen3.6-35B-A3B: Now Open-Source! 🚀🚀 A sparse MoE model, 35B total params, 3B active. Apache 2.0 license. 🔥 Agentic coding on par with models 10x its active size 📷 Strong multimodal perception and reasoning ability 🧠 Multimodal thinking + non-thinking modes Efficient. Pow
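As a rough illustration of how a sparse MoE can have far fewer active than total parameters, here is a toy top-k routing sketch in plain Python. It is a generic textbook-style router, not Qwen's actual implementation; the number of experts, the value of k, and the scalar "experts" are all hypothetical choices for this example.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Pick the k highest-probability experts; renormalize their weights to sum to 1."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]


def moe_forward(x, experts, router_logits, k):
    """Weighted sum over the selected experts only; the other experts are never evaluated."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))


# Hypothetical toy setup: 8 scalar "experts", 2 of them active per input.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in experts]

print("selected experts and weights:", route_top_k(logits, k=2))
print("output:", moe_forward(1.0, experts, logits, k=2))
# Ratio quoted in the post: 3B active out of 35B total parameters.
print(f"active fraction from the post: {3 / 35:.1%}")
```

Because only the selected experts run for each token, per-token compute tracks the active parameter count rather than the total, even though all experts must still be held in memory.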

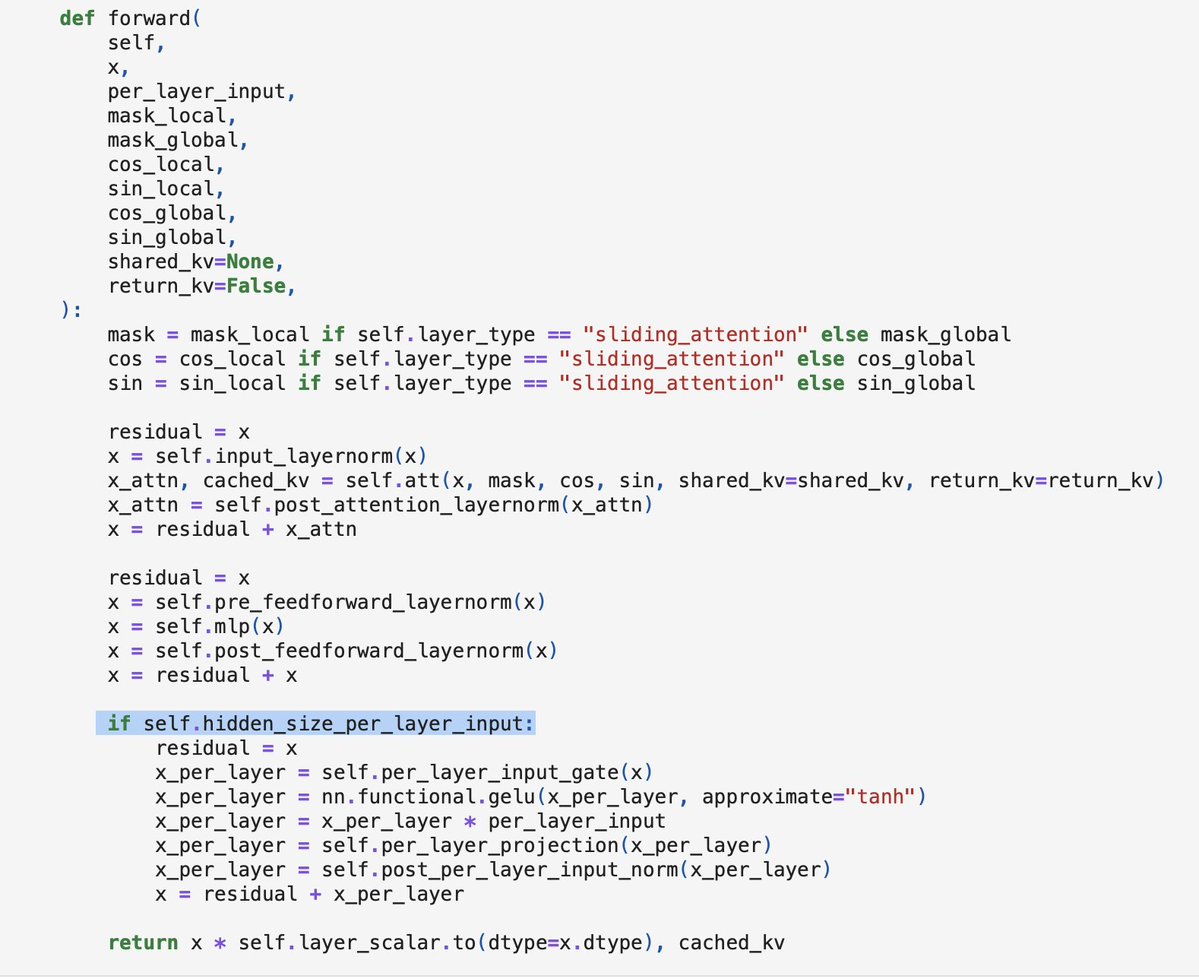

@DanielWulikk Have to think a bit about how to best visualize it, but if you are interested, I have a working from-scratch code implementation of Gemma 4 E2B in the meantime to see how per-layer embeddings are implemented: https://t.co/jyiq1vyJnH https://t.co/fVrSBWHNHl

Introducing gyaradax 🐉: A JAX solver for local flux-tube gyrokinetics with custom CUDA kernels for acceleration. This entire code was vibecoded by @ggalletti_ and me in a month. Validated against GKW (CPU-only Fortran code) with 10x speedups. Details and code in the replies. https://t.co/22PrHjItR5

DMax: Aggressive Parallel Decoding for dLLMs. Paper: https://t.co/y421NkegRD https://t.co/Y7Ut9Gxly8

abs: https://t.co/tSLRNXAgY3 code: https://t.co/ldWVGmuC4O blog post: https://t.co/yI0NmdaKHm